Navigating the shift from manual keyword bidding to algorithmic automation requires a level of strategic oversight that many seasoned professionals in the high-stakes world of enterprise software sales initially found daunting. Google’s Performance Max (PMax) marks a break from the granular control that once defined search engine marketing, and the complexity of a professional sales cycle means the default automation settings are rarely enough. Rather than relying passively on machine learning, marketers now need to act as strategic directors who supply the high-fidelity signals required to steer a multifaceted campaign.

This evolution matters because the margin for error in business-to-business (B2B) sectors is significantly thinner than in retail. Misallocated spend in a high-ticket industry does not just erode margins; it can fill the pipeline with junk leads that waste the time of highly paid sales representatives. Consequently, the conversation around PMax has moved from “set it and forget it” features to a rigorous look at how human expertise can refine artificial intelligence (AI). That means examining the technical and strategic layers that turn a generic automation tool into a precision instrument for growth.

Beyond the Black Box: Steering the AI Ship in B2B

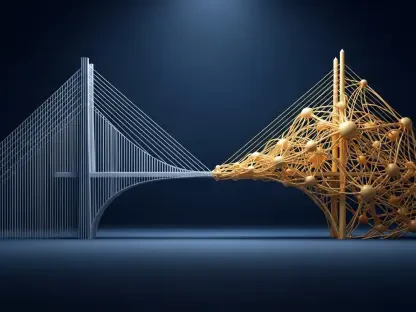

The era of manual bidding and granular keyword control is rapidly receding, replaced by a “black box” that promises efficiency but often delivers uncertainty. For B2B marketers, the transition to Google’s PMax has been met with a mix of curiosity and valid skepticism regarding the loss of visibility. Is it possible to maintain the precision required for complex professional sales cycles while surrendering control to an algorithm? The answer lies not in resisting automation, but in evolving from a manual technician into a strategic pilot who provides the high-fidelity data the machine needs to succeed.

Modern advertising systems work by pattern recognition, so they are only as effective as the examples they are given. A marketer who treats the platform as self-governing risks letting the AI optimize for the path of least resistance: cheap, easy conversions that reflect superficial engagement rather than buying intent. To keep authority over the campaign, one must define the boundaries of the “black box” through rigorous data inputs. Shifting the focus from individual keyword management to holistic signal integrity keeps the automation aligned with overarching business objectives rather than platform-specific metrics alone.

Why the B2B Landscape Demands a New PMax Approach

In the B2B world, the distance between an initial click and a closed contract is often measured in months, not minutes. Unlike B2C e-commerce, where a conversion is a definitive and immediate purchase, a B2B conversion is frequently just the start of a long relationship involving multiple stakeholders. This difference makes the standard “out-of-the-box” PMax setup risky for B2B firms that depend on high-intent interactions. With the sunsetting of Google’s “Similar Audiences” and the rise of AI-driven search formats, the lookalike shortcuts advertisers once leaned on are no longer available.

Success now hinges on how well a brand can translate its complex sales funnel into signals that Google’s AI can actually understand and replicate. Because the algorithm lacks the context of a boardroom negotiation, it needs proxies for intent that go beyond a simple page visit. Without a specialized approach, PMax may prioritize users who are merely researching general topics over those ready to enter a procurement process. Adjusting the campaign structure to account for these nuances is the most reliable way to bridge the gap between high-volume digital traffic and high-value professional interest.

Strategic Inputs: Guiding the Algorithm Toward Precision

To prevent PMax from wandering into irrelevant territory, marketers must use specific levers to refine the campaign’s focus. These tools replace traditional keyword targeting with intent-based signals that act as navigational beacons for the algorithm. Search themes are particularly vital here, as they allow a company to indicate query relevance and bridge the gap between automated placements and specific industry terminology. By providing these thematic anchors, the advertiser helps the machine understand which professional contexts are most likely to yield a qualified prospect.

Brand exclusion lists also safeguard the funnel by directing budget toward new prospects rather than paying for clicks from users who were already searching for the brand by name. This stops the AI from taking credit for existing brand equity and pushes it to hunt in competitive or educational spaces. To maintain this level of control, professionals should also use account-level channel reporting, which shows which parts of the Google ecosystem deliver high-intent professionals and which supply low-value, high-bounce traffic that drains resources.
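To make that channel comparison concrete, the sketch below assumes you have exported spend per channel from the reporting view and can label each CRM lead with the channel it came from and whether it became an SQL. The channel labels, the `(channel, is_sql)` input shape and the function name are all hypothetical; the idea is simply to rank channels by cost per qualified lead rather than by raw lead volume.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def cost_per_sql_by_channel(
    leads: Iterable[Tuple[str, bool]],   # hypothetical: (channel label, became an SQL?)
    spend_by_channel: Dict[str, float],  # hypothetical: channel label -> spend for the period
) -> Dict[str, Dict[str, float]]:
    """Summarize each channel by SQL rate and cost per SQL instead of raw lead count."""
    counts = defaultdict(lambda: [0, 0])  # channel -> [lead count, SQL count]
    for channel, is_sql in leads:
        counts[channel][0] += 1
        counts[channel][1] += int(is_sql)

    report = {}
    for channel, cost in spend_by_channel.items():
        # .get() avoids creating entries for channels that produced no leads at all
        n_leads, n_sqls = counts.get(channel, (0, 0))
        report[channel] = {
            "leads": n_leads,
            "sqls": n_sqls,
            "sql_rate": n_sqls / n_leads if n_leads else 0.0,
            # no SQLs means the spend produced no qualified lead yet
            "cost_per_sql": cost / n_sqls if n_sqls else float("inf"),
        }
    return report
```

A channel with plenty of leads but a very high (or infinite) cost per SQL is the kind of low-value traffic described above.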

Prioritizing Lead Integrity Over Lead Volume

In B2B marketing, a hundred spam leads are worth less than a single Sales Qualified Lead (SQL). Because PMax reaches across the vast Google Display Network and YouTube, it is especially exposed to “bot” traffic and low-quality form fills that distort performance metrics. This is why Offline Conversion Tracking (OCT) is non-negotiable. By feeding CRM data back into Google, a firm trains the AI to find users who eventually sign a contract, not just those who download a white paper. This feedback loop is the primary mechanism for improving lead quality over time.
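A minimal sketch of the export side of OCT, assuming the CRM stores the Google Click ID (GCLID) captured on the original form. It keeps only closed-won deals and writes rows in a CSV layout modeled on Google Ads’ offline conversion import template. The `CrmDeal` fields, the stage names and the conversion action name “Closed Won Deal” are hypothetical, and the column headers and time format should be checked against the current template before any upload.

```python
import csv
import io
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Optional


@dataclass
class CrmDeal:
    """Hypothetical CRM export row; field names are illustrative, not a real CRM schema."""
    gclid: Optional[str]  # Google Click ID captured on the lead form, if any
    stage: str            # e.g. "closed_won", "sql", "mql"
    closed_at: datetime   # timezone-aware timestamp of the stage change
    value: float          # deal value in the account currency


# Headers modeled on the offline conversion import template (verify before uploading).
HEADERS = ["Google Click ID", "Conversion Name", "Conversion Time",
           "Conversion Value", "Conversion Currency"]


def build_oct_rows(deals: Iterable[CrmDeal],
                   conversion_name: str = "Closed Won Deal",  # hypothetical action name
                   currency: str = "USD") -> List[List[str]]:
    """Keep only closed-won deals that can be tied back to an ad click."""
    rows = []
    for deal in deals:
        if deal.stage != "closed_won" or not deal.gclid:
            continue  # skip early-funnel stages and leads without a GCLID
        rows.append([
            deal.gclid,
            conversion_name,
            deal.closed_at.strftime("%Y-%m-%d %H:%M:%S%z"),  # e.g. 2024-03-01 14:30:00+0000
            f"{deal.value:.2f}",
            currency,
        ])
    return rows


def to_csv(rows: List[List[str]]) -> str:
    """Serialize the header plus rows as CSV text."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(HEADERS)
    writer.writerows(rows)
    return buf.getvalue()


if __name__ == "__main__":
    sample = [CrmDeal("abc123", "closed_won",
                      datetime(2024, 3, 1, 14, 30, tzinfo=timezone.utc), 48000)]
    print(to_csv(build_oct_rows(sample)))
```

Filtering to closed-won deals (rather than every form fill) is what ties the feedback loop to revenue instead of white-paper downloads.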

Beyond data feedback, technical safeguards keep the lead pool healthy. Enhanced conversions for leads matches hashed first-party details from a form submission, such as an email address, to the ad interaction that produced it, which makes the feedback loop more accurate, while reCAPTCHA on lead forms filters out automated submissions. Together they help the algorithm learn from genuine human interactions rather than automated scripts. When the system is protected from junk data, the model can concentrate on the behavioral patterns of real decision-makers. This shift from quantity to integrity means the marketing team delivers tangible value to the sales department rather than inflated numbers.
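Enhanced conversions for leads relies on user data being normalized and hashed with SHA-256 before it is sent. The helper below is a sketch that applies only the basic steps (trimming whitespace and lowercasing an email address) before hashing; Google’s documentation lists further normalization rules, so treat this as a starting point rather than a complete implementation. The function name is hypothetical.

```python
import hashlib


def normalize_and_hash_email(raw_email: str) -> str:
    """Trim whitespace, lowercase, then return the SHA-256 hex digest.

    Covers only basic normalization; check Google's enhanced conversions
    documentation for any additional rules before relying on it.
    """
    normalized = raw_email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()
```

Because normalization happens before hashing, `" Jane.Doe@Example.com "` and `"jane.doe@example.com"` produce the same digest, which is what allows the match to succeed.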

The First-Party Data Revolution in Audience Targeting

With the retirement of built-in “lookalike” tools such as Similar Audiences, the quality of a PMax campaign now directly reflects a company’s CRM hygiene. Success requires meticulously cleaning and segmenting customer data so the AI starts from the most accurate picture possible. Supplying lists of high-value clients as audience signals lets the algorithm find professionals across the Google ecosystem who share characteristics with the most profitable existing customers. This makes internal data the most valuable asset in the modern advertising toolkit.
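CRM hygiene in practice often means deduplicating contacts, dropping malformed records and segmenting by value before building an upload list. This sketch assumes a hypothetical `Customer` record with an email and a lifetime revenue figure, and an illustrative threshold of 50,000; real segmentation rules will depend on the business.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class Customer:
    email: str
    lifetime_revenue: float  # hypothetical CRM field


def high_value_seed_list(customers: Iterable[Customer],
                         min_revenue: float = 50_000.0) -> List[str]:
    """Deduplicate by normalized email, drop malformed rows, keep high-value accounts."""
    best: Dict[str, float] = {}
    for c in customers:
        email = c.email.strip().lower()
        if "@" not in email:
            continue  # malformed contact record; fix it in the CRM rather than upload it
        # treat duplicates as the same contact and keep the highest revenue figure
        if email not in best or c.lifetime_revenue > best[email]:
            best[email] = c.lifetime_revenue
    return sorted(e for e, rev in best.items() if rev >= min_revenue)
```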

Optimizing for revenue events is the logical conclusion of this data-centric strategy. The closer the data point is to actual revenue, the better the AI performs in its predictive modeling. Marketers should prioritize feeding the system data on closed-won deals and high-tier SQLs rather than broad top-of-funnel actions. By consistently updating these lists, a brand ensures that the campaign remains relevant as market conditions and buyer personas evolve. This proactive approach to data management transforms the CRM from a passive record-keeper into a dynamic engine for audience expansion.

Elevating Creative Strategy to a Performance Driver

B2B marketing has historically been visual-averse, often relying on text-heavy assets that prioritize information over engagement. However, as PMax leverages YouTube and the Display Network, creative is no longer just “window dressing”—it is a primary performance lever. The rise of AI-generated assets has allowed even smaller B2B teams to produce video and image content that was previously cost-prohibitive. These assets act as the first filter for an audience, signaling the professionalism and relevance of the brand before a user even clicks on the ad.

Scientific creative testing moves decisions from subjective opinion to data. PMax A/B testing lets marketers identify which visuals act as a “filter”, attracting the right professional audience while deterring those who are not a fit. For instance, a video that highlights a specific technical solution might attract an engineer, while a broader value-proposition image might resonate with a C-suite executive. Systematically testing these variations keeps the creative strategy tied to the broader goal of lead quality and turns every impression into a learning opportunity for the brand.
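When comparing two creative variants on a quality metric such as SQLs per lead, a standard two-proportion z-test is one way to check whether the difference is larger than chance. This is a generic statistical sketch, not the methodology Google uses inside PMax experiments, and any counts passed to it here are hypothetical.

```python
import math
from typing import Tuple


def two_proportion_z(conv_a: int, n_a: int, conv_b: int, n_b: int) -> Tuple[float, float]:
    """Return (z, two-sided p-value) for H0: rate_a == rate_b, using a pooled estimate."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled rate is 0 or 1; the test is undefined")
    z = (p_a - p_b) / se
    # two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

For example, `two_proportion_z(60, 1000, 30, 1000)` compares a 6% SQL rate against 3% and returns a z-score of roughly 3.2 with a p-value well below 0.01, suggesting the first variant’s advantage is unlikely to be noise.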

Transparency and Advanced Reporting Frameworks

The “black box” of PMax becomes significantly more transparent once marketers know where to find the relevant insights. Advanced reporting is the main way to verify that the automation is acting in the brand’s best interest. Search term insights provide the clarity needed for messaging alignment, while regular query audits feed the refinement of account-level negative keywords. These steps keep ads resonant with the current market rather than outdated trends, and let teams pivot based on real-world performance rather than theoretical projections.
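The query-audit step can be sketched as a simple rule: flag terms that have spent a meaningful amount without producing a qualified conversion, plus terms containing obviously low-intent words. This assumes term-level cost and conversion data is available from whichever report or export you use (PMax search term insights may group queries into categories), and the `SearchTermRow` record, the 100-unit spend threshold and the token list are all illustrative. Flagged terms are candidates for review, not automatic exclusions.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class SearchTermRow:
    term: str
    cost: float
    qualified_conversions: int  # e.g. SQLs imported via offline conversions


LOW_INTENT_TOKENS = {"free", "jobs", "salary", "tutorial"}  # illustrative only


def negative_keyword_candidates(rows: Iterable[SearchTermRow],
                                min_cost: float = 100.0) -> List[str]:
    """Return lowercased terms that look wasteful or low-intent, for manual review."""
    candidates = set()
    for row in rows:
        words = set(row.term.lower().split())
        wasted = row.cost >= min_cost and row.qualified_conversions == 0
        low_intent = bool(words & LOW_INTENT_TOKENS)
        if wasted or low_intent:
            candidates.add(row.term.lower())
    return sorted(candidates)
```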

Auction insights and competitive benchmarking also play a pivotal role in defending market position. By monitoring impression share against rivals, marketers can see where their brand is gaining or losing ground in its category. Asset-level performance audits further refine the process by flagging low-performing headlines or images for systematic replacement. Ultimately, these reporting frameworks let firms treat AI as a partner that requires constant feedback, so that every dollar spent contributes to long-term revenue growth and sustainable market authority.