Buyer decisions are increasingly forged inside AI answers long before a click occurs, and the sources those answers cite decide who collects revenue when intent peaks. As AI Overviews, ChatGPT, Claude, Perplexity, and a wave of vertical assistants shape final-mile choices, a quiet redistribution of authority has taken hold: structured, verifiable facts now outrun legacy brand equity, and agile publishers are winning the citations that convert. This shift raises an uncomfortable question for large enterprises with dominant domains and big budgets: are slow, narrative-heavy processes silently taxing AI visibility and starving pipeline?

Industry Overview

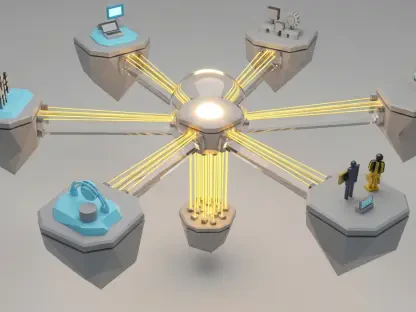

A new search surface is setting the rules. Instead of ten blue links and long dwell times, buyers now scan synthesized answers that assemble data, compare options, and cite a handful of sources. In that environment, machine-readable assets—schema-rich tables, JSON-LD datasets, price indices, uptime logs, SLAs, and compatibility charts—become the primary currency of authority. The old playbook of persuasive essays and glossy landing pages travels poorly into systems that privilege verifiable inputs over adjectives.

Moreover, the stakes have moved down the funnel. Placement in AI answers is not just an awareness metric; it often determines who gets contacted, trialed, or procured. Payments, logistics, SaaS, fintech, and healthcare show the sharpest effects because prices, policies, outages, and regulations can change week to week. When models reconcile fresh facts, early sources can become anchors of consensus, and latecomers must pay to dislodge them—if they can at all.

AI Discovery Is Rewriting Authority: How Citations Flow in an LLM-First Market

Incumbents still bring domain trust and backlinks, yet agile publishers with data-first operating models are outperforming them at the exact moment of decision. The reason is technical as much as editorial: structured inputs (Dataset, Product, SoftwareApplication, and ItemList schemas; clean HTML tables; authenticated feeds; and simple APIs) are easier for models to cross-verify. Claims that cannot be matched to numbers rarely survive into a compact summary.
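To make "structured input" concrete, here is a minimal Python sketch that describes a hypothetical payment-fee and uptime matrix as schema.org `Dataset` JSON-LD. The property names (`variableMeasured`, `distribution`, `DataDownload`, `contentUrl`, `encodingFormat`) come from schema.org; the dataset name, URL, dates, and the function names `build_dataset_jsonld` and `to_script_tag` are illustrative assumptions, and real markup should mirror the table a visitor actually sees.

```python
import json
from datetime import date

def build_dataset_jsonld(name, description, csv_url, modified):
    """Describe a published fee/uptime matrix as a schema.org Dataset.

    All values passed in below are hypothetical examples.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Dataset",
        "name": name,
        "description": description,
        "dateModified": modified.isoformat(),
        # Declare what each column measures so numbers can be matched to meaning.
        "variableMeasured": [
            {"@type": "PropertyValue", "name": "Transaction fee", "unitText": "percent"},
            {"@type": "PropertyValue", "name": "API uptime", "unitText": "percent"},
        ],
        # Point to a machine-readable copy of the same rows shown in the HTML table.
        "distribution": {
            "@type": "DataDownload",
            "encodingFormat": "text/csv",
            "contentUrl": csv_url,
        },
    }

def to_script_tag(obj):
    """Serialize JSON-LD for a page; escaping '</' keeps the payload inside the tag."""
    payload = json.dumps(obj, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

dataset = build_dataset_jsonld(
    name="Enterprise payment gateway fees",
    description="Per-transaction fees and API uptime by provider.",
    csv_url="https://example.com/data/gateway-fees.csv",
    modified=date(2024, 5, 1),
)
print(to_script_tag(dataset))
```

Keeping a CSV distribution alongside the markup gives a model two consistent representations of the same numbers to cross-check.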

Regulatory pressure also shapes what gets cited. Advertising standards, sector rules for finance and healthcare, and privacy expectations push content teams toward scoped facts with clear provenance. Ironically, that favors those who operationalize compliance from the start. Legal teams tend to approve precise metrics faster than sweeping superlatives, which flips the advantage toward organizations that can publish facts without rewriting code or re-litigating tone.

What’s Driving the AI Citation Shake-Up—and Who’s Benefiting

From Narratives to Numbers: Trends That Favor Machine-Readable Facts

AI systems privilege speed and verification. When pricing, tariffs, or policies move, recency can outrank reputation for a time because consensus has not yet formed. Smaller teams exploit that window by shipping matrices and spec tables that models can triangulate across sources. Narrative efforts, by contrast, trigger longer review cycles as stakeholders debate assertions that cannot be pinned to a dataset.

This is where generative engine optimization (GEO) becomes a data operation. Decoupling facts from storytelling lets teams ship approved fields through sanctioned templates while long-form copy catches up. In payments, a blog post claiming to be “the most secure” stalls in review, while a transaction fee and API uptime matrix ships the following week and wins the citation for “compare enterprise payment gateway fees.” In logistics, thought leadership lags while a freight delay and tariff matrix becomes the cited backbone of the answer.

By the Numbers: Citation Share, Speed Windows, and ROI Forecasts

The measurable spread is striking. Publishers that release structured updates within 14 days of a market shift have seen an average 32% uplift in AI citation share across ChatGPT-4, Perplexity, and AI Overviews. Enterprises often take about 180 days to publish comparable assets, by which point the initial consensus has hardened. Recovery then tends to require roughly nine months and around $120,000 in defensive paid media, with diminishing returns as models keep preferring the earlier, structured sources.

KPI discipline is vital. Teams that track AI Overview and LLM visibility separately from classic SERP rankings notice sooner when the two diverge and can act on it. Time-to-publish for factual assets becomes as important as rank tracking once was; the threshold that correlates with outperformance is publishing in under 14 days while a topic is still unsettled.
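One way to keep AI visibility distinct from SERP rank is to compute citation share per answer engine from a sample of tracked queries. The Python sketch below assumes a team already records, for each engine and query, the set of domains the answer cited; the tuple format, engine labels, and domains are hypothetical, and how answers are sampled is out of scope.

```python
from collections import defaultdict

def citation_share(samples, domain):
    """Return {engine: share of sampled answers that cite `domain`}.

    samples: iterable of (engine, query, cited_domains) tuples, where
    cited_domains is a set of domains (hypothetical monitoring format).
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for engine, _query, cited_domains in samples:
        totals[engine] += 1
        if domain in cited_domains:
            hits[engine] += 1
    # Report each engine on its own rather than blending them into one score.
    return {engine: hits[engine] / totals[engine] for engine in totals}

samples = [
    ("perplexity", "compare enterprise payment gateway fees", {"rival.example", "ours.example"}),
    ("perplexity", "payment gateway api uptime", {"rival.example"}),
    ("ai_overviews", "compare enterprise payment gateway fees", {"ours.example"}),
    ("ai_overviews", "payment gateway api uptime", {"ours.example", "news.example"}),
]
print(citation_share(samples, "ours.example"))  # {'perplexity': 0.5, 'ai_overviews': 1.0}
```

Tracking these per-engine shares over time next to the domain's classic rank for the same queries is what makes a divergence visible.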

The Bureaucracy Tax Inside the Enterprise—and How to Cut It

The bureaucracy tax is the cost of slow, cross-functional processes that delay the data assets AI systems look for. It accrues through multi-step approvals, narrative rewrites, IT release queues, and legacy CMS constraints. The commercial effect is simple: miss the speed window and a rival sets the reference point the models will cite for months.

The remedy is both technical and operational. Schema-locked, data-first templates restrict inputs to pre-approved fields and auto-generate JSON-LD alongside clean tables. Legal sign-off accelerates because the team is reviewing scoped values, not varied prose. An AI-readiness pod—a technical SEO lead, about 10% developer capacity, and a compliance liaison for data validation—runs this fast lane so factual updates no longer wait for broad releases or brand edits.
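A schema-locked template can be approximated in a few dozen lines: an allowlist of approved fields with allowed ranges, a validator that rejects anything else, and a single render step that emits both the HTML table and the JSON-LD from the same validated rows so the two cannot drift apart. This is a minimal Python sketch; the field names, ranges, and the use of a schema.org `ItemList` of `Product` items with `additionalProperty` are assumptions for illustration, not a prescribed schema.

```python
import html
import json

# Hypothetical pre-approved fields: name -> (kind, allowed range for numbers).
APPROVED_FIELDS = {
    "provider": ("text", None),
    "fee_pct": ("number", (0.0, 10.0)),
    "uptime_pct": ("number", (90.0, 100.0)),
}

class FieldError(ValueError):
    """Raised when a row contains anything outside the approved schema."""

def validate_row(row):
    unknown = row.keys() - APPROVED_FIELDS.keys()
    missing = APPROVED_FIELDS.keys() - row.keys()
    if unknown or missing:
        raise FieldError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for name, (kind, bounds) in APPROVED_FIELDS.items():
        value = row[name]
        if kind == "text":
            if not isinstance(value, str) or not value.strip():
                raise FieldError(f"{name} must be non-empty text")
        else:
            # bool is a subclass of int in Python, so exclude it explicitly.
            if isinstance(value, bool) or not isinstance(value, (int, float)):
                raise FieldError(f"{name} must be a number")
            low, high = bounds
            if not low <= value <= high:
                raise FieldError(f"{name}={value} is outside approved range {bounds}")
    return row

def render(rows):
    """Return (html_table, jsonld) built from the same validated rows."""
    rows = [validate_row(r) for r in rows]
    columns = list(APPROVED_FIELDS)
    header = "".join(f"<th>{html.escape(c)}</th>" for c in columns)
    body = "".join(
        "<tr>" + "".join(f"<td>{html.escape(str(r[c]))}</td>" for c in columns) + "</tr>"
        for r in rows
    )
    table = f"<table><thead><tr>{header}</tr></thead><tbody>{body}</tbody></table>"
    jsonld = {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "itemListElement": [
            {
                "@type": "ListItem",
                "position": i,
                "item": {
                    "@type": "Product",
                    "name": r["provider"],
                    "additionalProperty": [
                        {"@type": "PropertyValue", "name": c, "value": r[c]}
                        for c in columns if c != "provider"
                    ],
                },
            }
            for i, r in enumerate(rows, start=1)
        ],
    }
    return table, jsonld

table, ld = render([{"provider": "Gateway A", "fee_pct": 2.9, "uptime_pct": 99.95}])
print(table)
print(json.dumps(ld, indent=2))
```

A row carrying a free-text `tagline` such as “the most secure” raises `FieldError` because the field is not on the allowlist, which keeps adjectives on the brand track and only scoped values on the fast lane.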

Process redesign finishes the job. Facts are routed through pre-approved matrices on short SLAs, while narratives follow the usual brand cadence. Design variability is constrained to prevent code drift, and shadow IT is blocked to preserve security. The mandate that changes the curve is clear: material market shifts must pass from verified source to published schema within two weeks.
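The two-week mandate is straightforward to instrument once each update records when the market shift was verified and when the schema went live. A minimal Python sketch, assuming those two dates are available per update; the record format, IDs, and the `sla_report` name are hypothetical.

```python
from datetime import date, timedelta

SLA = timedelta(days=14)  # source-to-schema window from the two-week mandate

def sla_report(updates, today):
    """Classify each factual update against the 14-day SLA.

    updates: list of dicts with 'id', 'verified' (date the market shift was
    verified) and 'published' (date the schema went live, or None if not yet).
    Returns {id: (status, elapsed_days)}.
    """
    report = {}
    for u in updates:
        published = u["published"]
        end = published if published is not None else today
        elapsed = end - u["verified"]
        if published is None:
            # Still in the queue: flag it once it has exceeded the window.
            status = "overdue" if elapsed > SLA else "open"
        else:
            status = "met" if elapsed <= SLA else "missed"
        report[u["id"]] = (status, elapsed.days)
    return report

updates = [
    {"id": "fee-matrix", "verified": date(2024, 6, 1), "published": date(2024, 6, 10)},
    {"id": "tariff-table", "verified": date(2024, 6, 1), "published": date(2024, 6, 20)},
    {"id": "uptime-log", "verified": date(2024, 6, 1), "published": None},
    {"id": "compat-chart", "verified": date(2024, 6, 25), "published": None},
]
for update_id, (status, days) in sla_report(updates, today=date(2024, 6, 30)).items():
    print(f"{update_id}: {status} after {days} days")
```

Reporting `open` and `overdue` separately lets the pod see items that are close to breaching the window, not only those that already have.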

Compliance, Claims, and the New Rules for Publishable Facts

Rules do not vanish in an AI-first world; they become the blueprint. Claims substantiation, sector-specific mandates, and ad disclosures require that every published number trace back to a source. Programs that pre-approve fields, thresholds, and update cadences turn compliance into an accelerator rather than a brake. Security review hardens the template once; change control then governs inputs without reopening the architecture.

The practical outcome is fewer disputes over adjectives and faster legal cycles. Audit trails for each field, scoped metrics with ranges, and disciplined sourcing make approvals predictable. Teams still tell stories, but time-sensitive acquisition work rides on verified data that sails through governance and lands where models look first.
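A per-field audit trail can be as simple as an append-only log in which every change to a published value carries a range, a unit, a source, and an approver. The Python sketch below assumes that record shape; the class names, field names, and example values are hypothetical, and a production system would persist the log rather than hold it in memory.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class FieldChange:
    """One approved change to a published field (hypothetical record shape)."""
    field: str
    low: float          # published values are scoped as a range, low..high
    high: float
    unit: str
    source: str         # where the number came from, e.g. a report ID or URL
    approved_by: str    # who signed off on this value
    at: datetime        # when the change was recorded (UTC)

class AuditTrail:
    """Append-only, in-memory log; entries are never edited or deleted."""

    def __init__(self):
        self._log = []

    def record(self, field, low, high, unit, source, approved_by, at=None):
        if not source or not approved_by:
            raise ValueError("every published value needs a source and an approver")
        if low > high:
            raise ValueError("range low must not exceed range high")
        change = FieldChange(field, low, high, unit, source, approved_by,
                             at if at is not None else datetime.now(timezone.utc))
        self._log.append(change)
        return change

    def history(self, field):
        """All recorded changes for one field, oldest first."""
        return [c for c in self._log if c.field == field]

    def current(self, field):
        """The most recent approved value for a field, or None if never published."""
        changes = self.history(field)
        return changes[-1] if changes else None

trail = AuditTrail()
trail.record("api_uptime", 99.9, 99.95, "percent", "uptime-report-2024-Q1", "compliance-liaison")
trail.record("api_uptime", 99.95, 99.99, "percent", "uptime-report-2024-Q2", "compliance-liaison")
print(trail.current("api_uptime"))
```

Because a value without a source or approver never enters the log, a reviewer can answer "where did this number come from?" from the record itself.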

Where AI-First Visibility Is Headed Next

The technical substrate is getting richer. Expanded use of Dataset, Product, SoftwareApplication, and ItemList schemas, along with authenticated feeds and real-time hooks, will deepen how models reconcile facts. Vertical LLMs, agentic retrieval, and enterprise knowledge APIs are already biasing toward structured evidence they can refresh and reason over.

Buyer behavior is shifting in parallel. People gravitate toward concise, comparative, and verified answers that let them decide in a screen or two. Growth will concentrate in assets that mirror how decisions get made: specs and pricing indices, SLA and uptime records, compliance matrices, regional tariffs and taxes, and compatibility charts. Advantage will favor teams that can maintain those datasets with operational rigor while navigating regulation at speed.

What To Do Now—Closing the Gap and Reclaiming AI Citation Share

The findings point in one direction: agility beats legacy authority when consensus is forming, and the bureaucracy tax is avoidable with structural changes. Legal functions move faster when inputs are factual and schema-locked, so design systems that supply exactly that. The discipline to publish within the speed window decides who gets cited when intent spikes.

The immediate playbook is concrete. Map approval queues and quantify where days are lost; decouple facts from prose and standardize indices and matrices; fund a single, sanctioned sprint to build the schema-locked GEO template with embedded JSON-LD and strict field controls; stand up the AI-readiness pod to operate the fast lane; and monitor LLM and AI Overview placement as distinct metrics. Hitting the sub-14-day threshold on meaningful updates is the lever that consistently correlates with share gains.
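The first playbook step, mapping approval queues, amounts to measuring how long assets sit in each stage. A minimal Python sketch, assuming stage entry and exit dates can be exported from whatever workflow tool the team uses; the stage names, asset IDs, and dates below are hypothetical.

```python
from collections import defaultdict
from datetime import date

def days_lost_by_stage(events):
    """Average days assets spend in each approval stage, plus the slowest stage.

    events: iterable of (asset_id, stage, entered, exited) tuples with
    datetime.date values (hypothetical export format from a workflow tool).
    """
    total_days = defaultdict(int)
    visits = defaultdict(int)
    for _asset, stage, entered, exited in events:
        total_days[stage] += (exited - entered).days
        visits[stage] += 1
    averages = {stage: total_days[stage] / visits[stage] for stage in total_days}
    bottleneck = max(averages, key=averages.get) if averages else None
    return averages, bottleneck

events = [
    ("fee-matrix", "legal", date(2024, 5, 1), date(2024, 5, 15)),
    ("fee-matrix", "brand", date(2024, 5, 15), date(2024, 5, 20)),
    ("uptime-log", "legal", date(2024, 5, 2), date(2024, 5, 8)),
    ("uptime-log", "it_release", date(2024, 5, 8), date(2024, 5, 29)),
]
averages, bottleneck = days_lost_by_stage(events)
print(averages, bottleneck)  # {'legal': 10.0, 'brand': 5.0, 'it_release': 21.0} it_release
```

Averaging per stage rather than per asset points the sprint at the queue that costs the most days, which is where the fast lane should bypass first.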

Conclusion

This report shows that structured, rapidly published facts have displaced narrative authority in AI-led discovery, and that the cost of delay accumulates into measurable losses in citation share, demand capture, and media spend. Enterprises that quantify their bureaucracy tax, sanction a schema-locked template, and staff an AI-readiness pod regain ground by turning compliance into a speed advantage. The next step is to treat GEO as a continuous data operation: instrumented SLAs, real-time feeds where feasible, and a focus on the matrices that AI systems use to settle consensus. Teams that adopt those mechanics protect pipeline when markets shift, while those that defer change face longer, pricier climbs against entrenched citations.