The rapid transformation of static portraiture into fluid, conversational digital entities has lowered the cost and logistical barriers that once defined high-end video production. What was once a specialized task requiring a green screen, a professional crew, and hours of painstaking manual animation is now achievable through a single browser tab and a few megabytes of data. This technological leap marks a transition in the media landscape, away from captured reality and toward synthetic performance. At its core, the AI talking photo generator is not just an editing tool but an engine of digital reconstruction that synthesizes human expression from the latent space of trained neural networks. This review explores the current state of the technology, analyzing the mechanics that make the best of these digital avatars increasingly difficult to distinguish from filmed footage, and assesses the broader implications for global communication.

The Paradigm Shift: Understanding AI Talking Photo Technology

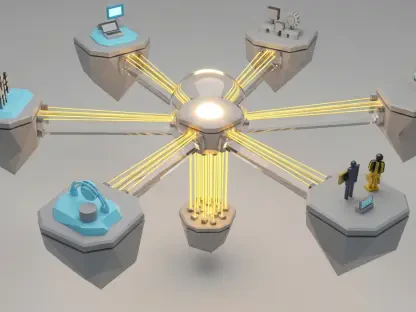

The fundamental shift in digital media is characterized by the migration from 2D image manipulation to full-scale 3D anatomical simulation driven by deep learning models. These systems utilize sophisticated neural networks to analyze the geometry of a human face, identifying specific landmarks that define how a particular individual moves and speaks. Unlike early animation attempts that merely stretched or warped pixels, modern generators construct a dynamic mesh over the static image. This process allows the software to predict how light would hit the skin during a jaw movement or how shadows would shift as the subject tilts their head. Such an advancement has democratized professional-grade video production, allowing individuals and organizations to produce high-fidelity content without the prerequisite of technical expertise or expensive hardware.

Emerging as a response to the high costs and labor-intensive nature of traditional videography, these generators serve as a bridge between static branding and interactive media. The technology is built on generative adversarial networks and diffusion models, which have been refined to prioritize temporal consistency. This means that each frame of the generated video is logically connected to the one before it, which greatly reduces the jitter and visual noise that once plagued synthetic media. In the broader technological landscape, the ability to generate a human performance from a single photograph shortens the content supply chain considerably. By reducing the reliance on physical proximity and human availability, companies can maintain a consistent presence across digital platforms far faster than filming allows.
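The idea of temporal consistency can be made concrete with a toy example. The sketch below is not how a GAN or diffusion model enforces consistency internally; it simply measures frame-to-frame jitter on one tracked landmark and shows how exponential smoothing reduces it. The function names `jitter` and `smooth` and the `alpha` parameter are illustrative assumptions, not part of any platform's API.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def jitter(track: List[Point]) -> float:
    """Mean frame-to-frame displacement of a single landmark, in pixels."""
    if len(track) < 2:
        return 0.0
    total = 0.0
    for (x0, y0), (x1, y1) in zip(track, track[1:]):
        total += ((x1 - x0) ** 2 + (y1 - y0) ** 2) ** 0.5
    return total / (len(track) - 1)


def smooth(track: List[Point], alpha: float = 0.5) -> List[Point]:
    """Exponential smoothing: each output frame blends the new position
    with the previous output, so every frame depends on the one before it."""
    if not track:
        return []
    out = [track[0]]
    for x, y in track[1:]:
        px, py = out[-1]
        out.append((alpha * x + (1 - alpha) * px,
                    alpha * y + (1 - alpha) * py))
    return out


# A landmark that flickers back and forth by two pixels (hypothetical data).
noisy = [(0.0, 0.0), (2.0, 0.0), (0.0, 0.0), (2.0, 0.0)]
print(jitter(noisy), jitter(smooth(noisy)))
```

A lower `alpha` gives a steadier track at the cost of lag behind the true motion, which is one reason smoothing alone is not a substitute for a model that is temporally consistent by design.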

Core Components and Technical Performance: Anatomical Rigging

At the heart of the most successful talking photo generators lies the concept of digital rigging, a process that converts a flat portrait into a three-dimensional entity. This feature involves the sophisticated analysis of anatomical landmarks such as the jawline, eye sockets, and brow ridges. By establishing these points of reference, the AI can simulate the complex interplay of facial muscles required for natural speech. The performance of modern generators ensures that the subject maintains a sense of depth and rotation, effectively avoiding the “flat cutout” aesthetic that characterized early attempts at image animation. This depth is critical for maintaining viewer immersion, as it allows the avatar to engage in naturalistic movements like nodding or glancing away from the camera, which are essential components of non-verbal communication.

The evolution of this rigging technology has moved toward a more holistic understanding of human physiology rather than just surface-level mimicry. For instance, current models now account for the movement of the neck and shoulders in relation to head turns, ensuring that the entire upper torso behaves in a synchronized manner. This level of detail is necessary to satisfy the human eye’s keen ability to detect unnatural movement. When the rigging is executed correctly, the resulting video feels anchored in reality, providing a stable foundation for the more complex tasks of lip-syncing and expression synthesis. Moreover, the transition between different poses is handled through advanced interpolation techniques, which ensure that even rapid movements remain smooth and free of technical artifacts.
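Pose interpolation of this kind can be sketched in a few lines. The example below assumes a head pose is stored as three Euler angles in degrees and eases between two poses with a smoothstep curve; the `HeadPose` type and function names are hypothetical, and production systems may well use quaternions or learned motion models instead.

```python
from dataclasses import dataclass


@dataclass
class HeadPose:
    yaw: float    # left/right turn, degrees
    pitch: float  # up/down nod, degrees
    roll: float   # sideways tilt, degrees


def smoothstep(t: float) -> float:
    """Ease-in/ease-out curve: 0 at t=0, 1 at t=1, zero slope at both ends."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def interpolate_pose(a: HeadPose, b: HeadPose, t: float) -> HeadPose:
    """Blend pose a into pose b; t runs from 0.0 (a) to 1.0 (b)."""
    s = smoothstep(t)
    return HeadPose(
        yaw=a.yaw + (b.yaw - a.yaw) * s,
        pitch=a.pitch + (b.pitch - a.pitch) * s,
        roll=a.roll + (b.roll - a.roll) * s,
    )


start = HeadPose(0.0, 0.0, 0.0)
end = HeadPose(20.0, 10.0, 0.0)
frames = [interpolate_pose(start, end, i / 4) for i in range(5)]
```

Because the easing curve has zero slope at both ends, the head accelerates out of the first pose and decelerates into the second rather than starting and stopping abruptly. Interpolating Euler angles directly is only reasonable for small rotations like these.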

Precision Lip-Syncing and Micro-Expression Synthesis

The success of any talking photo generator is largely judged by its ability to achieve psychological realism through precise lip-syncing. This involves aligning visemes, the physical shapes the mouth makes, with phonemes, the distinct units of sound in a language. Because several phonemes can share one mouth shape (the sounds “p,” “b,” and “m” all close the lips), the mapping is many-to-one. By leveraging deep learning models trained on massive datasets of human speech, modern systems can predict the mouth shape required for a given sound and time it closely to the audio track. This precision is what helps the technology narrow the “uncanny valley,” a psychological phenomenon where near-human representations cause a sense of unease in viewers. When the mouth movements are tightly synchronized with the audio, the viewer is far more likely to accept the synthetic persona as a legitimate communicator.
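The phoneme-to-viseme alignment can be illustrated with a simple lookup table. This is a minimal sketch under stated assumptions: the phoneme symbols follow ARPAbet-style notation, the viseme names and groupings are invented for illustration, and the timings are hypothetical. Real generators learn this mapping from data rather than reading it from a fixed table.

```python
from typing import List, Tuple

# Illustrative many-to-one mapping; the groupings are an assumption.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_teeth", "V": "lip_teeth",
    "AA": "open", "AE": "open",
    "OW": "rounded", "UW": "rounded",
    "IY": "spread", "EH": "spread",
}

Segment = Tuple[str, float, float]  # (label, start_seconds, end_seconds)


def to_viseme_track(phonemes: List[Segment]) -> List[Segment]:
    """Convert timed phonemes to timed visemes, merging back-to-back
    segments that share the same mouth shape."""
    track: List[Segment] = []
    for symbol, start, end in phonemes:
        viseme = PHONEME_TO_VISEME.get(symbol, "neutral")
        if track and track[-1][0] == viseme and track[-1][2] == start:
            # Same shape continues: extend the previous segment.
            track[-1] = (viseme, track[-1][1], end)
        else:
            track.append((viseme, start, end))
    return track


timed = [("M", 0.0, 0.1), ("AA", 0.1, 0.3), ("P", 0.3, 0.4), ("B", 0.4, 0.5)]
viseme_track = to_viseme_track(timed)
```

Merging adjacent segments with the same viseme avoids re-triggering an identical mouth shape, which would otherwise show up as a small visual stutter.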

Beyond the basic mechanics of speech, the integration of micro-expressions is what truly brings a digital avatar to life. These subtle movements, such as the slight narrowing of the eyes, the raising of a brow for emphasis, or the natural rhythm of blinking, are critical for conveying emotion and intent. Sophisticated generators now use “emotion-aware” algorithms that can interpret the tone of the input script and adjust the facial expressions accordingly. If a script is enthusiastic, the AI may increase the frequency of head nods and widen the eyes; if the tone is somber, the movements become more restrained. This layer of synthesis transforms a simple animation into a performance, making the technology an effective tool for storytelling and persuasive communication.
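One way to picture the “emotion-aware” adjustment is as a set of expression parameters that shift with the detected tone. The sketch below assumes the tone label has already been produced by some upstream classifier; the parameter names, preset values, and the `expression_for` function are all hypothetical and chosen only to mirror the enthusiastic and somber cases described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExpressionParams:
    nods_per_minute: float
    eye_openness: float  # 0.0 = closed, 1.0 = fully open
    brow_raise: float    # 0.0 = relaxed, 1.0 = fully raised


NEUTRAL = ExpressionParams(nods_per_minute=6.0, eye_openness=0.6, brow_raise=0.2)

# Illustrative presets; the numbers are assumptions, not measured values.
PRESETS = {
    "enthusiastic": ExpressionParams(14.0, 0.85, 0.5),
    "somber": ExpressionParams(2.0, 0.5, 0.1),
}


def expression_for(tone: str, intensity: float = 1.0) -> ExpressionParams:
    """Blend from neutral toward the preset for the given tone.
    Unknown tones fall back to neutral; intensity is clamped to [0, 1]."""
    target = PRESETS.get(tone, NEUTRAL)
    w = max(0.0, min(1.0, intensity))

    def mix(base: float, goal: float) -> float:
        return base + (goal - base) * w

    return ExpressionParams(
        nods_per_minute=mix(NEUTRAL.nods_per_minute, target.nods_per_minute),
        eye_openness=mix(NEUTRAL.eye_openness, target.eye_openness),
        brow_raise=mix(NEUTRAL.brow_raise, target.brow_raise),
    )


lively = expression_for("enthusiastic")
subdued = expression_for("somber")
```

Blending from a neutral baseline, rather than switching presets outright, lets a script that is only mildly upbeat produce a correspondingly mild change in the avatar's behavior.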

Integrated Voice Synthesis and Multilingual Globalization

The utility of a talking photo is significantly enhanced by the integration of high-quality Text-to-Speech engines and voice cloning capabilities. These systems no longer rely on the robotic, monotone voices of the past; instead, they deliver messages with human-like prosody, intonation, and emotional resonance. Voice cloning, in particular, allows users to record a short sample of their own voice, which the AI then uses to generate a digital vocal profile. This profile can then read any script while maintaining the unique timbre and accent of the original speaker. This capability ensures that the digital twin sounds as authentic as it looks, providing a cohesive and believable experience for the audience.

Furthermore, the ability to translate scripts into dozens of languages while automatically adjusting the lip-syncing allows for a truly “global-first” content strategy. A single video can be rendered in English, Spanish, Mandarin, and Arabic, with the avatar appearing to speak each language fluently. This is a significant development for international organizations that need to deliver consistent messaging across diverse geographic regions. The AI handles the heavy lifting of translation and synchronization, with the aim of respecting the cultural and linguistic nuances of the target audience. This global reach is achieved without the need for multiple actors or expensive dubbing sessions, making high-fidelity communication accessible on a worldwide scale.

Market Innovations and Industry Trends: Frictionless Creativity

Current developments in the sector are focusing heavily on “prompt-based” gesture systems, which allow creators to influence the mood and body language of an avatar through simple text commands. Instead of manually adjusting parameters, a user can simply type “speak with confidence” or “appear thoughtful,” and the AI will adjust the avatar’s posture, speed of speech, and facial expressions to match the request. This innovation represents a shift toward a more intuitive form of content creation where the human provides the creative direction and the AI handles the technical execution. This “frictionless creativity” is a major trend, as it enables the rapid production of content that still feels personalized and intentional.

There is also a significant move toward “no-code” interfaces that facilitate parallel production workflows. Leading platforms are designing their tools to be accessible to marketing managers, teachers, and small business owners who may not have any background in video editing. The focus is on speed and scalability, allowing a single user to generate dozens of personalized videos in the time it would take to film a single traditional take. Platforms like Zoice and HeyGen are pushing the boundaries of identity preservation, ensuring that a digital twin remains visually consistent across thousands of different iterations. This consistency is vital for brand building, as it allows a company to have a recognizable digital spokesperson that never tires and is always available to deliver a message.

Real-World Applications and Sector Deployment

In the realms of marketing and sales, AI talking photo generators are increasingly used to create “dynamic advertising.” Businesses use these tools to craft personalized video outreach messages that address individual clients by name and reference their specific needs. This level of tailoring is intended to lift engagement and conversion rates, on the premise that viewers are more likely to respond to a face-based message than to a generic text email. The ability to produce these videos at scale means that even large corporations can offer a “personal touch” to every customer in their database, bridging the gap between automated efficiency and human connection.

Corporate training and e-learning have also seen a massive transformation through the deployment of these tools. Platforms like Synthesia enable the mass production of onboarding materials and educational modules that can be updated quickly as company policies change. Instead of re-filming an entire training series, a coordinator can simply update the text script and regenerate the video. This not only saves a considerable amount of money but also helps ensure that employees have access to the most current information. For educators, the technology allows for the creation of interactive lessons that keep students engaged through visual and auditory stimulation, turning dry academic text into a more immersive learning experience.

Content creators and social media influencers are leveraging talking photos to maintain high posting frequencies across platforms like TikTok, Instagram, and YouTube. For many creators, the primary bottleneck is the physical act of filming; by using an AI avatar, they can continue to publish content even when they are traveling or busy with other projects. This allows them to maintain their algorithmic relevance without the risk of burnout. Additionally, the technology allows for the creation of “faceless” channels where the creator can remain anonymous while still providing a human element to their videos. This flexibility has opened up new creative possibilities for those who may be camera-shy but have valuable information or stories to share with the world.

Challenges, Regulatory Hurdles, and Limitations

Despite the remarkable advancements, the technology still faces several hurdles, most notably the persistent issue of the “uncanny valley.” While top-tier generators are very convincing, some tools still struggle with technical artifacts such as flickering or unnatural facial distortions, particularly during complex movements or when the subject is viewed from an extreme angle. These distortions can break the illusion of reality and distract the viewer from the intended message. Furthermore, high-resolution output requires significant cloud computing power, which often translates into credit-based costs that can be prohibitive for independent creators or small non-profits. The hardware barrier remains a reality, as the most sophisticated models require the latest GPU clusters to render video in a reasonable timeframe.

Ethical and transparency concerns are perhaps the most significant challenge facing the industry. The rise of synthetic media has sparked a global conversation about deepfakes and the potential for misinformation. If a realistic video of a person can be created from a single photo and a fake audio clip, the potential for identity theft and the spread of false narratives is substantial. To combat this, many platforms are implementing strict usage policies and “watermarking” their videos to indicate they were generated by AI. There is a growing call for regulatory frameworks that mandate the disclosure of AI-involved content to maintain public trust. Balancing the creative potential of the technology with the need for security and authenticity remains a complex task for developers and policymakers alike.

Furthermore, there are concerns regarding the ownership of digital likenesses. As it becomes easier to animate any photo, the question of who owns the rights to a synthetic performance becomes increasingly blurred. If a company uses a stock photo to create a successful brand ambassador, does the original model have a claim to a share of the profits? These legal questions are currently being debated in courts around the world, and the outcomes will likely shape the future of the industry. For now, users must navigate a landscape of evolving terms of service and copyright laws, making it essential for organizations to be diligent about the provenance of the images and voices they use in their AI-generated content.

Future Outlook and Breakthrough Potential

Looking ahead, the next frontier for AI talking photo generators is the introduction of even more nuanced emotional intelligence. Future developments are expected to allow avatars to react dynamically to user input in real-time, making them suitable for live customer service or interactive virtual assistants. Imagine a digital concierge that not only answers questions but also reads the user’s frustration or satisfaction through their voice and adjusts its own demeanor to compensate. This level of interactivity would represent a massive leap forward in how humans interact with machines, moving closer to the seamless integration of AI into daily life.

Potential breakthroughs in localized processing may also move these generators from cloud-only environments to high-speed on-device execution. As mobile processors become more powerful, it is likely that high-quality talking photo generation will be possible directly on a smartphone without the need for an internet connection. This would allow for even greater privacy and lower costs, as users would not have to pay for server time. Such a move would also enable real-time applications in augmented and virtual reality, where digital avatars could inhabit the physical space around us, providing information and entertainment in a truly immersive way.

In the long term, AI talking photo generators will likely become a cornerstone of the global media economy, blurring the lines between synthetic and captured reality. We may see a world where the majority of video content is generated or enhanced by AI, with “pure” videography becoming a niche artistic choice. This shift will require a fundamental reassessment of how we consume and verify information. However, the potential for positive impact—such as making education more accessible, helping businesses grow, and allowing for new forms of creative expression—is immense. The journey from a static photo to a living, breathing digital persona is just the beginning of a larger transformation in human communication.

Summary of the Technological Assessment

The transition of AI talking photo generators from a digital novelty to a piece of essential media infrastructure has been swift. This review has highlighted that the technology now offers a balance of cost-efficiency, scalability, and high-fidelity output that traditional studios struggle to match. The ability to rig a 2D image for 3D movement and synchronize it with multilingual voice synthesis has fundamentally changed the rules of content creation. While the industry must still navigate the technical challenges of the uncanny valley and the ethical complexities of synthetic media, the value proposition for marketers, educators, and creators is clear. These tools show that the digital persona is a versatile and powerful asset in a world that demands constant, high-quality communication.

Organizations and individuals who adopt these tools early stand to gain a competitive advantage by producing personalized, engaging content at a fraction of the traditional cost. Platforms like Zoice and HeyGen have set a high bar for realism and user experience, making the technology accessible to a non-technical audience. As the underlying models continue to improve, the gap between synthetic and real video will likely continue to shrink, and may eventually become imperceptible to the average viewer. This democratization of video production is perhaps the most significant outcome of the technology, as it empowers anyone with a story or a product to share it with a global audience in a compelling, visual format.

The move toward automated creativity does not replace the need for human ingenuity; rather, it provides a more powerful set of brushes for the modern artist and communicator. By automating the mechanical aspects of video production, these generators allow creators to focus on the “what” and “why” of their message rather than the “how.” The future of the media economy is clearly intertwined with the advancement of these AI systems, and the progress made thus far suggests a trajectory toward even more integrated and intelligent digital experiences. As we move forward, the focus will likely shift from achieving basic realism to exploring the creative possibilities that synthetic media enables, paving the way for a new era of human-AI collaboration.

The verdict on AI talking photo generators is that they have bridged much of the gap between static information and dynamic engagement. They offer a solution to the scalability problem of modern media, allowing for a volume of content that was previously impractical. While regulatory and ethical discussions must keep pace with these advancements, the technological foundation is solid and continues to expand. The impact on education, marketing, and the broader media sector has been transformative, signaling a lasting change in the digital landscape. For anyone looking to stay relevant in an increasingly visual and automated world, understanding and using these tools is becoming a necessity rather than an option.

Strategic implementation of these technologies requires a careful balance of creative vision and technical awareness. The most successful users are those who understand the limitations of the current models and work within those bounds to create authentic experiences. For instance, prioritizing high-quality input images and well-crafted scripts helps the resulting videos maintain a professional standard. As the technology moves into its next phase, the focus on emotional intelligence and real-time interaction promises to further deepen the connection between digital avatars and their human audiences. This evolution should keep the talking photo generator at the cutting edge of technological innovation.

In the final analysis, the development of these generators is a testament to the power of deep learning to solve complex human problems. By translating the nuances of human expression into code, developers have created a tool that extends our ability to communicate across time, space, and language. The journey from a simple animated GIF to a fully realized digital twin is a remarkable achievement in engineering and design. As these systems become more refined and widely available, they will continue to redefine what it means to be a “creator” in the twenty-first century, offering a glimpse into a future where the digital and physical worlds are more closely aligned than ever before.

The global media infrastructure has been altered by the arrival of these tools, which offer a glimpse into a future where content is as fluid as thought. The efficiency gains described in this review suggest that the synthetic persona is not just a fad but a fundamental evolution in how we present ourselves to the world. As we move toward a more automated economy, the ability to generate human-centric media on demand is becoming a key driver of growth and innovation. The talking photo generator stands as a symbol of this progress, a tool that turns the silent pixels of the past into the eloquent voices of the future.

The integration of these systems into daily workflows also prompts a reevaluation of our relationship with digital identity. As individuals begin to curate their digital twins with the same care they use for their physical appearance, the concept of the “persona” takes on a new, more tangible meaning. This shift encourages a deeper exploration of how we represent ourselves in virtual spaces, and may lead to more diverse and creative forms of self-expression. The technology does more than just animate photos; it provides a platform for a new kind of digital existence that is limited mainly by the imagination of the user. This combination of empowerment and innovation is the defining characteristic of the AI talking photo generator.

As the industry matures, the focus is shifting toward ensuring that these powerful tools are used responsibly and for the benefit of society. Collaboration between tech companies, ethicists, and lawmakers is becoming a crucial part of the development process, with the aim of maximizing the technology’s potential for good while mitigating its risks. Such a proactive approach helps to build the public trust necessary for the widespread adoption of synthetic media. The intended result is a robust and vibrant ecosystem where AI and human creativity flourish side by side, creating a media landscape that is more inclusive, accessible, and dynamic.