Marketing departments often prioritize the granular collection of user data above all else, yet this pursuit frequently creates an invisible barrier to organic search success. Internal tracking parameters, such as UTM codes or session IDs embedded in site navigation, create a fundamental conflict between analytics precision and technical search performance. This guide is for digital strategists and developers looking to reconcile these competing needs. By following the steps below, organizations can eliminate redundant query strings, resolve URL bloat, and move to a tracking architecture that preserves both data integrity and search visibility.

The Hidden Conflict Between Marketing Data and Search Engine Performance

The core of the problem lies in how tracking parameters create a sprawling, unmanageable site architecture that confuses both users and machines. While a marketer sees a way to measure click-through rates on a homepage banner, a search engine crawler sees an entirely new URL that requires indexing. This redundant navigation leads to a phenomenon known as URL bloat, where a single piece of content exists under dozens of different addresses. Consequently, the site’s ability to satisfy user expectations and maintain high rankings begins to degrade under the weight of its own technical debt.

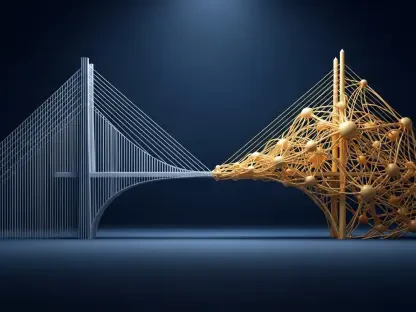

As the digital landscape becomes increasingly dominated by AI-driven discovery and rapid-response search algorithms, structural simplicity has become a non-negotiable requirement. High-performing websites must balance the need for insights with the technical necessity of a clean, efficient infrastructure. The shift from legacy URL-based tracking toward modern, DOM-based methods is not merely a technical preference; it is a vital step in ensuring that search engines and AI agents can accurately identify the authoritative source of your content without navigating a maze of redundant query strings.

Why URL Hygiene Dictates Modern Search Visibility

In the early days of the web, query parameters were a standard way to monitor user journeys, but search engines have since come to reward structural simplicity. Crawl efficiency is not itself a ranking signal, but it governs how quickly and how completely search engines discover and refresh a site's valuable content, which they cannot do well while spending resources on thousands of identical URL variations. When marketing departments prioritize internal UTMs over technical hygiene, they inadvertently bury their most important pages, making it difficult for automated agents to determine which version of a page is the correct one to serve to users.

A disorganized URL structure sends conflicting signals to search engines about which content is relevant and trustworthy. Clean URL hygiene ensures that every visit from a crawler is focused on high-value, unique content rather than being trapped in a loop of tracked variations. Furthermore, a streamlined architecture improves the predictability of a site, allowing search engines to map the content hierarchy more effectively. This clarity is essential for maintaining visibility in an era where speed and precision are the hallmarks of successful digital platforms.

Analyzing Technical Consequences and Modern Implementation Solutions

Step 1: Evaluating the Impact of URL Bloat on Crawl Efficacy

Understanding the Difference Between Discovery and Meaningful Crawling

Search engines treat every unique query string as a separate URL, which forces crawlers to spend crawl budget fetching identical content under many tracked links. Discovery is the initial act of finding a URL; meaningful crawling is the fetching and analysis of content that can actually contribute to rankings. When a crawler is bogged down by parameters, it may never reach the deeper layers of a site where new or updated information resides.
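To see how one page fans out into many crawlable addresses, the sketch below groups a list of discovered URLs by a normalized form with tracking keys removed. The tracking keys (utm_*, sessionid, sid, gclid) and the example.com URLs are assumptions for illustration; a real audit would use the parameters your own site actually emits.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking keys; adjust to your own tagging scheme.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"sessionid", "sid", "gclid"}


def is_tracking_param(key: str) -> bool:
    """True if a query key carries tracking data rather than selecting content."""
    k = key.lower()
    return k.startswith(TRACKING_PREFIXES) or k in TRACKING_KEYS


def normalize(url: str) -> str:
    """Drop tracking keys (and the fragment), keeping content params in order."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if not is_tracking_param(k)]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def group_variants(urls):
    """Map each normalized URL to the distinct crawlable variants that point at it."""
    groups = defaultdict(set)
    for url in urls:
        groups[normalize(url)].add(url)
    return groups


discovered = [
    "https://example.com/pricing",
    "https://example.com/pricing?utm_source=home&utm_medium=banner",
    "https://example.com/pricing?utm_source=nav&sessionid=abc123",
    "https://example.com/blog?page=2&utm_campaign=spring",
]
for clean, variants in group_variants(discovered).items():
    print(f"{clean}: {len(variants)} URL(s) for one page")
```

Here the pricing page is reachable under three addresses, each of which a crawler would fetch separately.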

Identifying Diluted Crawl Demand and Extended Navigation Paths

When parameters proliferate, high-value money pages lose priority in the crawl queue because search engine resources are spread thin across redundant variants. These extended navigation paths act as obstacles, forcing crawlers to work through multiple duplicates before reaching the canonical content. Over time, this dilution can slow index updates and reduce how often a search engine revisits the core sections of a website.
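A rough way to quantify this dilution is to measure what share of crawler fetches in your access logs landed on tracked variants rather than on unique URLs. The sketch below assumes the logs have already been filtered down to search engine crawler requests; the sample paths and the tracking-key list are hypothetical.

```python
from urllib.parse import parse_qsl, urlsplit

# Assumed tracking keys; mirror whatever your internal links actually use.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"sessionid", "sid"}


def has_tracking(url: str) -> bool:
    """True if any query key in the URL looks like a tracking parameter."""
    for key, _ in parse_qsl(urlsplit(url).query, keep_blank_values=True):
        k = key.lower()
        if k.startswith(TRACKING_PREFIXES) or k in TRACKING_KEYS:
            return True
    return False


def wasted_crawl_share(crawled_paths: list[str]) -> float:
    """Fraction of crawler fetches spent on tracked duplicates of other URLs."""
    if not crawled_paths:
        return 0.0
    wasted = sum(1 for path in crawled_paths if has_tracking(path))
    return wasted / len(crawled_paths)


# Hypothetical sample of crawler requests pulled from access logs.
sample = (
    ["/pricing"] * 2
    + ["/pricing?utm_source=nav"] * 3
    + ["/pricing?utm_source=footer&sessionid=9"] * 3
    + ["/docs/new-feature"] * 2
)
print(f"{wasted_crawl_share(sample):.0%} of fetches hit tracked variants")  # 60%
```

In this made-up sample, six of ten fetches go to duplicates of the pricing page, while the new documentation page receives only two.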

Step 2: Recognizing the Limitations of Reactive Canonicalization

Why rel="canonical" is an Indexing Band-Aid, Not a Discovery Cure

Many developers rely on canonical tags as their primary defense against duplicate content, but these tags take effect only after a search engine has already spent resources fetching the redundant URL. Canonicalization is a reactive tool, and a hint rather than a directive: it tells the indexer how to group pages, but it does not stop the crawler from visiting those pages in the first place. It therefore addresses duplicate listings in search results but not the underlying waste of crawl budget.
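The sketch below shows what canonicalization does on the server: every request, tracked or not, is answered with a link element pointing at the clean URL. It also illustrates the limitation described above, because the tag is only read after the crawler has already fetched the variant. The allow-list of content parameters (page, lang) is an assumption; an allow-list is used instead of a deny-list so that unfamiliar new tracking keys are dropped by default.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed allow-list: the only query keys that change what this site renders.
CONTENT_PARAMS = {"page", "lang"}


def canonical_url(request_url: str) -> str:
    """Keep allow-listed params in their original order; drop everything else."""
    parts = urlsplit(request_url)
    kept = [(k, v) for k, v in parse_qsl(parts.query) if k in CONTENT_PARAMS]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))


def canonical_link_tag(request_url: str) -> str:
    """Render the <link> element for the <head> of the page being served."""
    return f'<link rel="canonical" href="{escape(canonical_url(request_url))}">'


# By the time the crawler reads this tag, it has already fetched the tracked variant.
print(canonical_link_tag("https://example.com/blog?page=2&utm_source=nav"))
```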

Troubleshooting Common Search Console Indexing Errors

Relying on canonicals alone often leads to frustrating statuses in Google Search Console. “Discovered – currently not indexed” means the search engine knows a URL exists but has not yet crawled it, which can indicate that crawl capacity is being spent elsewhere, for example on tracked variants. Statuses such as “Duplicate without user-selected canonical” or “Alternate page with proper canonical tag” show that those variants are being crawled and then set aside as duplicates. By removing the tracking parameters at the source, webmasters can shrink these reports and ensure that the most important pages receive the attention they deserve.
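Fixing the problem at the source starts with finding the internal links that carry tracking parameters. This minimal audit uses Python's standard html.parser module to list same-host link targets with tracking keys; the key list and the sample markup are assumptions, and a real audit would run it across every rendered template.

```python
from html.parser import HTMLParser
from urllib.parse import parse_qsl, urljoin, urlsplit

# Assumed tracking keys; extend with whatever your templates append.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"sessionid", "sid"}


class TrackedLinkAuditor(HTMLParser):
    """Collect same-host <a href> values that carry tracking parameters."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.host = urlsplit(base_url).netloc
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        parts = urlsplit(urljoin(self.base_url, href))
        if parts.netloc != self.host:
            return  # external links are out of scope for this audit
        flagged = [
            k for k, _ in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower().startswith(TRACKING_PREFIXES) or k.lower() in TRACKING_KEYS
        ]
        if flagged:
            self.findings.append((href, flagged))


def audit(html: str, base_url: str):
    """Return (href, tracking keys) pairs for internal links that need cleaning."""
    auditor = TrackedLinkAuditor(base_url)
    auditor.feed(html)
    auditor.close()
    return auditor.findings


page_html = """
<nav>
  <a href="/pricing?utm_source=nav&amp;utm_medium=menu">Pricing</a>
  <a href="/docs">Docs</a>
  <a href="https://partner.example.org/?utm_source=example">Partner</a>
</nav>
"""
for href, keys in audit(page_html, "https://example.com/"):
    print(f"{href} -> remove {', '.join(keys)}")
```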

Step 3: Assessing the Hazards of Data Integrity and Session Fragmentation

Preventing Session Breakage in Analytics Platforms

Internal tracking codes often trigger a reset in analytics tools, essentially stripping the original organic search attribution from the conversion path. When a user clicks an internal link with a UTM code, the software may interpret this as a new session starting from that link rather than a continuation of the initial visit. This breakage makes it nearly impossible to determine the true ROI of organic traffic, as the conversion is attributed to the internal campaign rather than the search entry.
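The mechanism is easier to see in a toy model. The function below is a deliberately simplified last-touch rule, assumed for illustration and not the actual session logic of any analytics product: a utm_source on any URL in the visit overwrites the stored source.

```python
from urllib.parse import parse_qs, urlsplit


def attributed_source(hits):
    """Toy last-touch model (hypothetical; not any analytics vendor's exact rules).

    hits: ordered (url, entry_source) pairs for one visit; entry_source is only
    known on the landing hit. A utm_source on any later URL overwrites the
    stored source, which is how internal UTMs can hijack organic attribution.
    """
    source = None
    for url, entry_source in hits:
        params = parse_qs(urlsplit(url).query)
        if "utm_source" in params:
            source = params["utm_source"][0]
        elif source is None:
            source = entry_source
    return source


organic_visit = [("https://example.com/guide", "google / organic")]
with_internal_utm = organic_visit + [
    ("https://example.com/pricing?utm_source=homepage_banner", None),
    ("https://example.com/checkout", None),
]
with_clean_links = organic_visit + [
    ("https://example.com/pricing", None),
    ("https://example.com/checkout", None),
]
print(attributed_source(with_internal_utm))  # homepage_banner
print(attributed_source(with_clean_links))   # google / organic
```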

Eliminating Reporting Noise for Accurate ROI Measurement

By removing parameters, marketers can avoid fragmented engagement metrics and see a unified, accurate view of how each page performs. Unified data allows for better decision-making, as it provides a clear picture of the user journey without the interference of artificial session breaks. This clarity ensures that marketing budgets are allocated based on real-world performance rather than skewed data points caused by technical tracking errors.
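Until internal UTMs are retired, historical reports can be partially repaired by rolling tracked variants back up into their clean URLs. The sketch assumes a page report exported as (URL, pageviews) rows and treats only utm_-prefixed keys as tracking; the figures are invented sample data.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def strip_utm(url: str) -> str:
    """Return the path plus any non-utm_ query params (assumed tracking prefix)."""
    parts = urlsplit(url)
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if not k.lower().startswith("utm_")]
    return urlunsplit(("", "", parts.path, urlencode(kept), ""))


def consolidate(rows):
    """Sum a per-URL metric so tracked variants roll up into one page."""
    totals = Counter()
    for url, value in rows:
        totals[strip_utm(url)] += value
    return totals


# Hypothetical page-report export: (page URL, pageviews).
report = [
    ("/pricing", 120),
    ("/pricing?utm_source=nav", 45),
    ("/pricing?utm_source=footer", 30),
    ("/blog?page=2", 10),
]
print(consolidate(report))
```

This only merges page-level counts; it cannot recover the original entry source of sessions that were already re-attributed.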

Step 4: Mitigating Backlink Fragmentation and Performance Lag

Consolidating Link Equity and Ranking Power

Clean URLs ensure that when users share links externally, the resulting link equity flows directly to the canonical page rather than to a tracked variant. When a user copies a URL containing an internal tracking parameter and posts it on a forum or social network, the backlink points at the variant, so its ranking signals depend on the search engine correctly consolidating that variant with the canonical page. Consolidating these signals into a single, clean URL maximizes the authority of each page and helps it rank higher.

Improving Cache Hit Rates and Time to First Byte

Tracking parameters often bypass Content Delivery Network (CDN) caches, forcing the origin server to generate an entirely new response and slowing down the user experience. Because many CDNs include the full query string in the cache key by default, each parameterized URL is treated as a distinct object: the first request for every variant misses the edge cache and travels back to the origin, raising Time to First Byte. Standardizing URLs maximizes cache hit rates, which reduces server load and ensures that users receive content as quickly as possible, regardless of their navigation path.
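Most CDNs let you control which query parameters make up the cache key, but the configuration syntax differs by vendor, so the sketch below is a vendor-neutral model rather than any provider's API. It drops assumed tracking keys and sorts the remaining parameters so that ?page=2&q=shoes and ?q=shoes&page=2 share one cache entry.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit

# Assumed keys the origin never uses to vary its response.
IGNORED_PREFIXES = ("utm_",)
IGNORED_KEYS = {"sessionid", "gclid"}


def cache_key(url: str) -> str:
    """Host + path + sorted non-tracking params: one cache entry per real page."""
    parts = urlsplit(url)
    kept = sorted(
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if not (k.lower().startswith(IGNORED_PREFIXES) or k.lower() in IGNORED_KEYS)
    )
    query = urlencode(kept)
    base = parts.netloc + parts.path
    return f"{base}?{query}" if query else base


urls = [
    "https://example.com/pricing",
    "https://example.com/pricing?utm_source=nav",
    "https://example.com/pricing?sessionid=abc&utm_medium=email",
]
print({cache_key(u) for u in urls})  # {'example.com/pricing'}
```

This is only safe if the origin never varies its response on the ignored keys. Client-side analytics that read UTMs from the address bar keep working, because the cached HTML is identical for every variant.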

Step 5: Transitioning to DOM-Based Tracking for a Clean Architecture

Implementing Custom Data Attributes for Interaction Monitoring

Custom data attributes (data-*) in the HTML let marketing teams track clicks and interactions without altering the URL structure at all. By embedding campaign details directly in page elements such as buttons or links, you can capture them with a script that reads the attributes when the element is clicked. This approach keeps the browser's address bar clean while still providing the granular data that analytics teams need for their reports.
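As a migration aid, the function below moves utm_* values out of an internal href and into data-* attributes, leaving a clean link. The data-track-* naming scheme is an assumption rather than a standard; any consistent prefix that your tag manager can read will do.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def to_data_attributes(href: str):
    """Split utm_* params out of an internal href into data-track-* attributes."""
    parts = urlsplit(href)
    kept, tracking = [], {}
    for k, v in parse_qsl(parts.query, keep_blank_values=True):
        if k.startswith("utm_"):
            # utm_campaign -> data-track-campaign (naming scheme is hypothetical)
            tracking["data-track-" + k[len("utm_"):]] = v
        else:
            kept.append((k, v))
    clean = urlunsplit((parts.scheme, parts.netloc, parts.path,
                        urlencode(kept), parts.fragment))
    return clean, tracking


def render_link(href: str, text: str) -> str:
    """Render an <a> element with a clean href and tracking data in attributes."""
    clean, data = to_data_attributes(href)
    attrs = "".join(f' {name}="{escape(value)}"' for name, value in data.items())
    return f'<a href="{escape(clean)}"{attrs}>{escape(text)}</a>'


print(render_link("/pricing?utm_campaign=spring&utm_content=hero", "See pricing"))
```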

Configuring Tag Managers for Non-Destructive Event Tracking

Setting up listeners in a tag management system enables the capture of all necessary campaign data while keeping internal links canonical and SEO-friendly. This method involves creating triggers that respond to the custom data attributes mentioned in the previous step, sending the information directly to the analytics platform. This transition ensures that the technical SEO foundation remains solid while the marketing team maintains its ability to monitor complex user behavior across the site.
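In the browser, the tag manager's click trigger reads these attributes from the clicked element and forwards them as an event. The Python function below models only that translation step; the internal_link_click event name and the flat payload shape are hypothetical and should be replaced with whatever schema your analytics platform expects.

```python
def build_click_event(element_attrs: dict, event_name: str = "internal_link_click"):
    """Turn data-track-* attributes on a clicked element into an event payload.

    Hypothetical schema: every data-track-<name> attribute becomes a <name>
    parameter (hyphens -> underscores). Elements without such attributes
    produce no event, and the link's href is never modified.
    """
    prefix = "data-track-"
    params = {
        name[len(prefix):].replace("-", "_"): value
        for name, value in element_attrs.items()
        if name.startswith(prefix)
    }
    if not params:
        return None
    return {"event": event_name, "link_url": element_attrs.get("href"), **params}


clicked = {"href": "/pricing", "data-track-campaign": "spring", "data-track-content": "hero"}
print(build_click_event(clicked))
```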

Key Findings for Maintaining a High-Performance Site Structure

- Crawl Efficiency: Removing parameters allows search engines to focus on unique, high-value content without the distraction of redundant URLs.

- Data Accuracy: Eliminating internal UTMs prevents session resets and preserves original attribution sources for more reliable reporting.

- Link Authority: Clean links prevent the dilution of external ranking signals across multiple variations, strengthening the site’s overall authority.

- Site Speed: Standardizing URLs maximizes caching potential across CDNs, significantly reducing server load and improving page load times.

- AI Readiness: Simplified architectures are easier for AI crawlers and LLM-based retrieval systems to process accurately, supporting durable visibility.

The Future of AI Retrieval and Infrastructure-Level Solutions

As the web moves toward an AI-driven landscape, the cost of URL noise is rising for many organizations. AI agents and Retrieval-Augmented Generation (RAG) systems operate under strict efficiency constraints and are highly sensitive to duplicate data. To address this at the infrastructure level, the No-Vary-Search HTTP response header has emerged: it lets a server declare which query parameters do not change the response, so that supporting clients can treat tracked and untracked variants as the same resource. Support is still limited and comes mainly from browsers, but the trend suggests that the future of the web belongs to sites that prioritize a clean-first architecture, ensuring content is as accessible to machines as it is to humans.
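The header value is a structured-field dictionary: params=(...) lists parameters to ignore, params together with except=(...) ignores everything except the listed keys, and key-order states that parameter order does not matter. The helper below is a minimal sketch that serializes those forms; the parameter names are examples, and the server must genuinely return the same response regardless of the ignored keys.

```python
def no_vary_search(ignore=None, keep=None, key_order=True):
    """Serialize a No-Vary-Search header value (minimal sketch).

    ignore: parameter names that never change the response -> params=("a" "b")
    keep:   ignore every parameter except these -> params, except=("a")
    """
    if ignore and keep is not None:
        raise ValueError("pass either ignore or keep, not both")
    names = list(ignore or []) + list(keep or [])
    if any('"' in n or "\\" in n for n in names):
        raise ValueError("names with quotes or backslashes need escaping")
    members = []
    if key_order:
        members.append("key-order")
    if ignore:
        members.append("params=(" + " ".join(f'"{n}"' for n in ignore) + ")")
    elif keep is not None:
        members.append("params")
        if keep:
            members.append("except=(" + " ".join(f'"{n}"' for n in keep) + ")")
    return ", ".join(members)


print("No-Vary-Search: " + no_vary_search(ignore=["utm_source", "utm_medium", "utm_campaign"]))
print("No-Vary-Search: " + no_vary_search(keep=["page"], key_order=False))
```

The first form suits a site that knows its tracking keys; the second is stricter and also covers tracking keys added later, at the cost of listing every parameter that does change content.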

Securing Your Digital Growth Through Technical Excellence

Using tracking parameters in internal links is an outdated practice that undermines both technical SEO and data accuracy. Organizations that shift to DOM-based tracking and standardized data attributes can expect gains in crawl efficiency and attribution clarity. Moving forward, the focus should be on adopting the No-Vary-Search header and similar infrastructure-level controls to keep URL bloat from re-emerging. These actions do more than clean up a site; they establish a resilient framework capable of supporting the next generation of AI-led search interactions. Prioritizing technical hygiene keeps every piece of content discoverable, authoritative, and ready for a more efficient web.