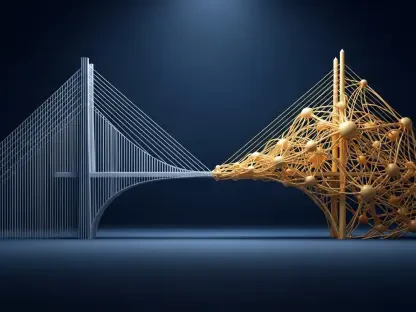

The intersection of ground-truth data and market intelligence represents the new frontier of search strategy. By integrating first-party insights from Google Search Console and Google Analytics with the expansive competitive landscape provided by Semrush, SEO professionals can move beyond generic recommendations toward high-precision execution. This approach, powered by the Model Context Protocol (MCP) and AI-driven analysis, allows for a unified view of performance that balances what is happening on a site with why it is happening within the broader market.

The following discussion explores the methodologies for reconciling these data sets, identifying high-impact “striking distance” opportunities, and leveraging real-time AI interactions to safeguard organic visibility against algorithm shifts and competitive pressure.

Combining ground-truth data from Search Console with market intelligence like keyword difficulty reveals insights neither tool offers alone. How do you reconcile discrepancies between these data sets, and what specific metrics do you prioritize when building a unified performance view for a new site?

Reconciling these data sets requires treating Google Search Console as the “ground truth” for actual impressions and positions, while using Semrush as the lens for competitive context. When discrepancies arise, such as a tool estimating 1,000 monthly visits for a keyword while GSC shows only 200 clicks, I lean on GSC for volume but look to market intelligence to explain the gap. Often the keyword difficulty (KD) turns out to be higher than anticipated, or SERP features are absorbing clicks. For a new site, I prioritize a “Unified Visibility Score” that combines GSC impressions with Semrush KD and search volume. Specifically, I look for queries where the site holds an average position between 5 and 15 with more than 500 impressions, then filter for a KD below 35% to identify the path of least resistance. This alignment ensures we aren’t chasing high-volume “ghost” keywords where the site has no actual footprint, and instead focuses on real momentum backed by competitive viability.
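The filtering step can be sketched in a few lines of Python. This is a minimal illustration, not a tool’s built-in feature: it assumes GSC query data (impressions, clicks, average position) has already been exported and joined with Semrush KD and volume by query. The `QueryRow` schema, the function name and the sample rows are hypothetical; only the thresholds come from the answer above.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    """One query with GSC performance data joined to Semrush metrics (hypothetical schema)."""
    query: str
    impressions: int   # Google Search Console impressions
    clicks: int        # Google Search Console clicks
    position: float    # GSC average position
    kd: float          # Semrush Keyword Difficulty, 0-100
    volume: int        # Semrush monthly search volume


def striking_distance(rows, min_pos=5.0, max_pos=15.0, min_impressions=500, max_kd=35.0):
    """Keep queries with a real GSC footprint that also look competitively viable."""
    hits = [
        r for r in rows
        if min_pos <= r.position <= max_pos
        and r.impressions > min_impressions
        and r.kd < max_kd
    ]
    # Largest existing footprint (impressions) first
    return sorted(hits, key=lambda r: r.impressions, reverse=True)


# Invented sample rows, for illustration only
rows = [
    QueryRow("crm for startups", 1800, 40, 8.2, 28, 2400),
    QueryRow("best crm", 5200, 30, 12.5, 72, 33000),
    QueryRow("crm pricing comparison", 650, 12, 6.1, 31, 880),
    QueryRow("free crm template", 300, 5, 9.0, 18, 1300),
]
for r in striking_distance(rows):
    print(f"{r.query}: position {r.position}, KD {r.kd}, {r.impressions} impressions")
```

Filtering on GSC data first and Semrush data second mirrors the “ground truth first, market context second” ordering: a keyword never enters the list unless the site already has impressions for it.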

Keywords ranking in the five-to-twenty range often represent the fastest growth opportunities if their difficulty is low. What specific technical or on-page adjustments do you implement to push these terms into the top three, and how do you calculate the potential click-through rate uplift for stakeholders?

To push these “striking distance” keywords into the top three, I first audit the page for topical depth, often adding semantically related subheadings discovered through competitive gap analysis. Technically, I ensure the primary keyword is supported by internal links from higher-authority pages, a move that often provides the necessary “crawl equity” to jump from page two to page one. To calculate the potential uplift for stakeholders, I use the current GSC average position and CTR as a baseline and then model the traffic if the term reached position 3, which typically earns a significantly higher share of clicks. The calculation takes the current impressions, multiplies them by a benchmark CTR for position 3, and subtracts current clicks to produce a concrete “monthly click uplift” figure. Presenting a prioritized list that ranks keywords by this uplift multiplied by the inverse of their KD (that is, uplift divided by KD) makes a compelling business case for immediate optimization.
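The uplift arithmetic and the ranking rule can be expressed as two small functions. This is a sketch under stated assumptions: the 10% CTR for position 3 is a hypothetical placeholder (published CTR curves vary, and the site’s own GSC CTR-by-position data may be a better source), and the keyword rows are invented.

```python
# Hypothetical benchmark CTR for position 3. Substitute a published CTR curve
# or the site's own GSC CTR-by-position data.
TARGET_CTR_POS3 = 0.10


def monthly_click_uplift(impressions, clicks, target_ctr=TARGET_CTR_POS3):
    """Extra monthly clicks if the keyword moved to position 3 (floored at zero)."""
    return max(0.0, impressions * target_ctr - clicks)


def priority_score(impressions, clicks, kd, target_ctr=TARGET_CTR_POS3):
    """Uplift multiplied by the inverse of KD; KD is floored at 1 to avoid division by zero."""
    return monthly_click_uplift(impressions, clicks, target_ctr) / max(kd, 1.0)


# Invented sample data: (keyword, monthly impressions, monthly clicks, KD)
keywords = [
    ("crm pricing comparison", 650, 12, 31),
    ("crm for startups", 1800, 40, 28),
]
ranked = sorted(keywords, key=lambda k: priority_score(k[1], k[2], k[3]), reverse=True)
for kw, impr, clicks, kd in ranked:
    uplift = monthly_click_uplift(impr, clicks)
    print(f"{kw}: +{uplift:.0f} clicks/month, priority {priority_score(impr, clicks, kd):.2f}")
```

For “crm for startups”, 1,800 impressions × 0.10 − 40 current clicks gives 140 extra clicks per month, and dividing by a KD of 28 gives a priority of 5.0.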

Modern SEO workflows now allow AI models to interact directly with live databases through standardized protocols. Beyond simple data fetching, how does this real-time interaction change your ability to troubleshoot sudden ranking drops, and what are the risks of relying on AI-generated visualizations?

Real-time interaction via protocols like MCP transforms troubleshooting from a manual forensic exercise into a conversational diagnostic. If a site experiences a sudden drop, I can immediately ask the AI to cross-reference GA4 engagement metrics with GSC ranking shifts and Semrush backlink data to see if the drop is isolated to “thin content” pages with fewer than five ranking keywords. However, the risk of relying on AI-generated visualizations is that LLMs can sometimes misread underlying JSON data or create charts that look authoritative but misattribute channel data. I once saw a dashboard that suggested a massive organic spike, but upon closer inspection of the raw JSON files, the AI had conflated “Direct” traffic with “Organic Search” due to a labeling error in the fetcher script. It is essential to perform a sanity check by comparing at least three data points in the visualization against the raw tool output before presenting findings to a client.
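The “compare at least three data points” check can be made mechanical. The sketch below assumes both the chart’s values and the raw fetcher output can be reduced to a mapping from `(date, channel)` to a metric value. That shape, the 1% tolerance and the sample numbers are assumptions for illustration, not an output format of GA4, GSC or any MCP server.

```python
import math


def sanity_check(chart_values, raw_values, rel_tol=0.01, min_checks=3):
    """Compare values plotted in an AI-generated chart with the raw tool output.

    Both arguments map (date, channel) -> metric value. Returns the mismatching
    points; raises ValueError if fewer than min_checks points can be compared.
    """
    shared = sorted(set(chart_values) & set(raw_values))
    if len(shared) < min_checks:
        raise ValueError(f"only {len(shared)} comparable points; need at least {min_checks}")
    return [
        (key, chart_values[key], raw_values[key])
        for key in shared
        if not math.isclose(chart_values[key], raw_values[key], rel_tol=rel_tol)
    ]


# Invented example: the chart's Organic Search value for one day includes Direct sessions
raw = {
    ("2024-05-01", "Organic Search"): 1200,
    ("2024-05-01", "Direct"): 900,
    ("2024-05-02", "Organic Search"): 1150,
}
chart = {
    ("2024-05-01", "Organic Search"): 2100,
    ("2024-05-01", "Direct"): 900,
    ("2024-05-02", "Organic Search"): 1150,
}
mismatches = sanity_check(chart, raw)
for key, shown, actual in mismatches:
    print(f"{key}: chart shows {shown}, raw data says {actual}")
```

Raising an error when fewer than three points overlap turns “I didn’t have enough data to check” into a visible failure, so it cannot pass silently as “no mismatches found”.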

Some high-traffic pages rank for very few keywords, making them vulnerable to algorithm shifts. How do you identify these fragile pages using engagement metrics, and what is your process for expanding their topical coverage without hurting current performance?

I identify these “fragile” pages by pulling the top 20 blog pages by sessions from GA4 and cross-referencing them with Semrush to calculate the ratio of sessions to ranking keywords. A page with 5,000 monthly sessions but only three ranking keywords is a red flag, as it relies too heavily on a narrow intent that a single algorithm update could wipe out. To expand topical coverage, I identify related clusters where competitors rank but our page is invisible, then integrate those topics into the existing content as new sections or FAQ modules. I monitor the “before and after” GSC click data for the original primary keywords to ensure that broadening the scope doesn’t dilute the page’s relevance for its existing high-performers. This process effectively builds a “moat” around the high-traffic page by diversifying the keyword portfolio that supports its visibility.
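The sessions-to-keywords ratio is simple to compute once GA4 sessions and Semrush keyword counts are keyed by URL. In this minimal sketch, the 1,000 sessions-per-keyword cutoff is a hypothetical threshold. The answer above gives only the 5,000-sessions, three-keyword example, which this cutoff would flag. The sample URLs and counts are invented.

```python
def fragile_pages(sessions_by_url, keywords_by_url, top_n=20, max_ratio=1000.0):
    """Flag top pages whose traffic rests on very few ranking keywords.

    sessions_by_url: GA4 sessions per page (e.g. monthly).
    keywords_by_url: Semrush ranking-keyword count per page.
    max_ratio: hypothetical sessions-per-keyword threshold above which a page is flagged.
    """
    top = sorted(sessions_by_url.items(), key=lambda kv: kv[1], reverse=True)[:top_n]
    flagged = []
    for url, sessions in top:
        n_keywords = keywords_by_url.get(url, 0)
        ratio = sessions / max(n_keywords, 1)  # pages with zero keywords use a divisor of 1
        if ratio > max_ratio:
            flagged.append((url, sessions, n_keywords, round(ratio, 1)))
    # Most concentrated pages (highest sessions per keyword) first
    return sorted(flagged, key=lambda t: t[3], reverse=True)


# Invented sample data
sessions = {"/blog/crm-guide": 5000, "/blog/pipeline-stages": 4200, "/blog/cold-email": 900}
keyword_counts = {"/blog/crm-guide": 3, "/blog/pipeline-stages": 140, "/blog/cold-email": 1}
for url, s, k, r in fragile_pages(sessions, keyword_counts):
    print(f"{url}: {s} sessions, {k} keywords, {r} sessions per keyword")
```

Limiting the check to the top pages by sessions keeps the focus on pages where a lost ranking would actually hurt. A low-traffic page with a single keyword, like the invented `/blog/cold-email`, is not flagged.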

Many brands waste budget by bidding on search terms where they already dominate organic results. What criteria do you use to decide when to pause a high-performing paid campaign, and how do you monitor the impact on total traffic once the ads are turned off?

The primary criterion for pausing a paid campaign is “Organic Dominance,” which I define as holding a top-3 organic position for a keyword that also has high ad spend and a high CPC. By cross-referencing Google Ads search term data with Semrush organic rankings, I can pinpoint exactly where we are paying for clicks we would likely earn for free. After pausing these ads, I monitor “Total Search Clicks” (Paid + Organic) over a 30-day period to ensure the organic listing is capturing the lion’s share of the former paid traffic without a significant net loss. If the total traffic remains stable while the ad spend drops to zero, the experiment is a success, and those savings can be reallocated to “Gap Clusters” where the brand has no organic presence at all. This data-backed approach can save clients thousands of dollars monthly while maintaining their overall market share.
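Both halves of this process, selecting pause candidates and judging the 30-day result, can be sketched as follows. The schema assumes Google Ads search-term data has already been joined with Semrush organic positions. The spend, CPC and 5% loss thresholds are hypothetical stand-ins for “high ad spend”, “high CPC” and “no significant net loss”, and the sample terms are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PaidTerm:
    """A Google Ads search term joined to its Semrush organic rank (hypothetical schema)."""
    term: str
    monthly_spend: float                # ad cost for the term
    cpc: float                          # average cost per click
    organic_position: Optional[float]   # None if the domain does not rank organically


def pause_candidates(terms, max_position=3, min_spend=500.0, min_cpc=2.0):
    """'Organic Dominance': top-3 organic rank plus high spend and CPC (thresholds hypothetical)."""
    return [
        t for t in terms
        if t.organic_position is not None
        and t.organic_position <= max_position
        and t.monthly_spend >= min_spend
        and t.cpc >= min_cpc
    ]


def total_clicks_stable(before_paid, before_organic, after_paid, after_organic, max_loss=0.05):
    """Compare total search clicks (paid + organic) across two 30-day windows.

    max_loss is a hypothetical tolerance for what counts as 'no significant net loss'.
    """
    before = before_paid + before_organic
    after = after_paid + after_organic
    return after >= before * (1 - max_loss)


# Invented sample data
terms = [
    PaidTerm("acme crm", 1500.0, 4.20, 1),
    PaidTerm("crm software", 3000.0, 6.50, 8),
    PaidTerm("acme crm login", 80.0, 0.50, 1),
]
print([t.term for t in pause_candidates(terms)])
print(total_clicks_stable(before_paid=1200, before_organic=3000, after_paid=0, after_organic=4050))
```

The stability test compares combined totals rather than organic clicks alone. Organic clicks rising after a pause is expected, so the real question is whether that rise covers the paid clicks that disappeared.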

Identifying keyword clusters where competitors are visible but your domain remains invisible is a cornerstone of market analysis. How do you prioritize which gaps to fill first, and what role does authority score play when deciding whether to compete for a high-volume topic?

Priority is given to “High-Volume, Low-KD” clusters where at least two major competitors rank in the top 10 but our GSC data shows zero impressions, confirming a total lack of visibility. I group these gaps into topic clusters and rank them by the aggregate volume of all keywords within each cluster to understand the potential prize. Authority Score (AS) plays a decisive role here: if a competitor has an AS of 70 and we are at 40, I avoid competing head-to-head for high-volume “head terms” and instead target the “long-tail” opportunities within that cluster. If our AS is comparable or higher, I recommend an aggressive content strategy to displace the competitor, using their top-performing URLs as a blueprint for the quality and depth required to win. This ensures we only enter battles we have a realistic chance of winning based on our current domain strength.
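The gap filter, cluster aggregation and Authority Score rule can be sketched together. The field names are hypothetical. The KD cutoff of 35 reuses the threshold from the first answer, since this answer does not give one. The 10-point margin for “comparable” AS is likewise an assumption, and the sample keywords are invented.

```python
from collections import defaultdict


def prioritize_gaps(keywords, min_competitors=2, max_kd=35):
    """Group gap keywords into clusters ranked by aggregate search volume.

    Each keyword is a dict with hypothetical fields: 'keyword', 'cluster', 'volume',
    'kd', 'competitors_top10' (tracked competitors ranking in the top 10) and
    'our_impressions' (GSC impressions for our domain).
    """
    clusters = defaultdict(lambda: {"volume": 0, "keywords": []})
    for k in keywords:
        is_gap = k["competitors_top10"] >= min_competitors and k["our_impressions"] == 0
        if is_gap and k["kd"] < max_kd:
            clusters[k["cluster"]]["volume"] += k["volume"]
            clusters[k["cluster"]]["keywords"].append(k["keyword"])
    return sorted(clusters.items(), key=lambda kv: kv[1]["volume"], reverse=True)


def approach(our_as, competitor_as, margin=10):
    """Compete for head terms only when our Authority Score is comparable or higher.

    'Comparable' is modeled as within a hypothetical margin of the competitor's score.
    """
    return "head terms" if our_as >= competitor_as - margin else "long tail"


# Invented sample data
kws = [
    {"keyword": "crm onboarding checklist", "cluster": "onboarding", "volume": 900,
     "kd": 22, "competitors_top10": 3, "our_impressions": 0},
    {"keyword": "crm onboarding template", "cluster": "onboarding", "volume": 400,
     "kd": 18, "competitors_top10": 2, "our_impressions": 0},
    {"keyword": "crm data migration", "cluster": "migration", "volume": 1100,
     "kd": 30, "competitors_top10": 2, "our_impressions": 0},
    {"keyword": "crm vs erp", "cluster": "comparisons", "volume": 5000,
     "kd": 60, "competitors_top10": 4, "our_impressions": 0},
    {"keyword": "crm for startups", "cluster": "startups", "volume": 2400,
     "kd": 28, "competitors_top10": 3, "our_impressions": 1800},
]
for name, info in prioritize_gaps(kws):
    print(name, info["volume"], info["keywords"])
print(approach(40, 70))
```

Ranking by cluster volume rather than single-keyword volume means that two modest onboarding keywords together can outrank one larger standalone topic, which matches the “potential prize” framing.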

High impressions paired with low click-through rates usually signal a mismatch between user intent and your metadata. What is your method for auditing competitor titles to find a winning edge, and how do you test these changes to ensure they actually drive more traffic?

My method involves isolating pages with more than 2,000 impressions but a CTR below 2% in GSC, then using the AI to “crawl” the live SERPs for the primary keywords associated with those pages. I ask the model to summarize recurring patterns in the titles of the top three competitors, such as brackets, years, or specific power words, to see what users are actually clicking on. I then generate three improved title tag options and implement them using an A/B testing framework, changing only the metadata on a subset of pages. Success is measured by a statistically significant lift in CTR over a 14-day period compared with the previous 14 days, adjusted for seasonal fluctuations (for example, by checking the unchanged pages over the same windows). If the CTR improves without a drop in average position, I roll the new title format out across the rest of the relevant category.
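The page filter and the significance check can be sketched with the standard library alone. Here “statistically significant” is modeled as a one-sided two-proportion z-test on CTR. This is a common approximation that treats each impression as an independent trial, and it is not necessarily the test any given A/B framework uses. The page tuples and click counts are invented.

```python
import math


def low_ctr_pages(pages, min_impressions=2000, max_ctr=0.02):
    """pages: (url, impressions, clicks) tuples from GSC."""
    return [p for p in pages if p[1] > min_impressions and p[2] / p[1] < max_ctr]


def ctr_lift_z(clicks_a, impr_a, clicks_b, impr_b):
    """Two-proportion z-test: is CTR in period B higher than in period A?

    Returns (lift, z). A one-sided z above about 1.645 corresponds to p < 0.05.
    Treats each impression as an independent trial, which is an approximation.
    """
    p_a = clicks_a / impr_a
    p_b = clicks_b / impr_b
    pooled = (clicks_a + clicks_b) / (impr_a + impr_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impr_a + 1 / impr_b))
    return p_b - p_a, (p_b - p_a) / se


# Invented sample data
pages = [("/a", 5000, 60), ("/b", 1500, 10), ("/c", 3000, 90)]
print([p[0] for p in low_ctr_pages(pages)])

# Test pages: previous 14 days vs. 14 days after the title change
lift, z = ctr_lift_z(clicks_a=150, impr_a=10000, clicks_b=230, impr_b=10200)
print(f"CTR lift {lift:.4f}, z = {z:.2f}, significant: {z > 1.645}")
```

Running the same function on the unchanged pages over the same two windows is one way to account for seasonality. If those pages show a similar lift, the change in CTR is probably not caused by the new titles.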

What is your forecast for AI-driven SEO analysis?

I forecast that SEO will move away from “static reporting” toward “autonomous diagnostics” where AI agents don’t just show you data, but actively suggest and execute changes in real-time. We are entering an era where the competitive advantage will no longer be “having the data,” but the speed at which you can turn that data into a live dashboard that updates itself hourly. As tools like Claude Code and the Model Context Protocol become more integrated, I expect we will see SEOs spending 80% less time on CSV exports and 80% more time on high-level strategic pivots. The ultimate goal is a self-healing website strategy that identifies a ranking drop, analyzes the competitor’s new content, and drafts an optimization brief before a human even logs into the dashboard.