The digital landscape has changed profoundly: appearing in a search engine’s top results no longer guarantees that content will be consumed or acknowledged by the artificial intelligence models that now serve as the primary interface for millions of users. Recent investigations into the behavior of generative AI models have revealed a staggering disparity between the raw volume of information retrieved during a research phase and the specific data points that make it into a final response. While a typical query might trigger an exhaustive search across hundreds of webpages, the vast majority of these discovered sources are discarded during the synthesis process. This creates a highly competitive environment in which only a small fraction of the web is cited, suggesting that the criteria for digital relevance have shifted from mere visibility to a more rigorous standard of informational utility and alignment with the specific needs of the generative model.

The Structural Dynamics of AI Research

The Disparity Between Visibility and Selection

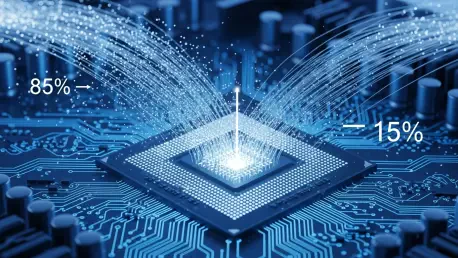

The current state of generative search is defined by a citation gap: the AI surfaces a vast number of webpages during its initial research phase but ultimately leaves 85% of them out of the final output. Data from trials involving 15,000 unique prompts and over 548,000 retrieved pages indicate that simply being searchable or ranking high on a traditional search engine is no longer sufficient to ensure a brand’s message reaches the end user. This massive filtration suggests that the AI is not just looking for keywords but is actively evaluating the depth, context, and structural integrity of the content it finds. With only 15% of discovered pages presented as citations, most content serves merely as background noise or redundant data. The selection process is governed by internal synthesis requirements that prioritize pages that directly answer the nuances of a prompt over those that offer broad but shallow coverage.
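To make the scale of the citation gap concrete, the short Python sketch below applies the reported 15% citation share to the reported page count. It assumes the 15% figure applies to the full pool of retrieved pages and treats "over 548,000" as exactly 548,000, so the results are rough lower bounds rather than figures taken from the study.

```python
# Figures reported in the text. "Over 548,000" is treated as exactly
# 548,000 here, which is an assumption made for illustration.
RETRIEVED_PAGES = 548_000
CITED_PERCENT = 15  # share of retrieved pages that end up cited


def citation_gap(retrieved: int, cited_percent: int) -> tuple[int, int]:
    """Return (cited, discarded) page counts for a given citation share."""
    cited = retrieved * cited_percent // 100  # integer math avoids float noise
    return cited, retrieved - cited


cited, discarded = citation_gap(RETRIEVED_PAGES, CITED_PERCENT)
print(f"cited: {cited:,}  discarded: {discarded:,}")
```

Under these assumptions, roughly 82,200 pages are cited and about 465,800 are retrieved but never shown to the user.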

This high level of attrition in the research phase underscores the importance of quality over quantity in modern digital publishing. For a source to survive the 85% discard rate, it must offer authoritative detail that the AI can use for fact-checking and summarization. The model acts as a rigorous editor, stripping away filler and off-topic material to distill only the most pertinent information for the user’s specific request. Consequently, content creators who once pursued high-volume traffic through generic topics must now pivot toward highly specific, data-rich assets that provide the precise context an AI model deems essential for its final drafting stage. If a page fails to meet these internal quality benchmarks, it remains invisible to the user even when it is crawled and indexed by the AI’s search component. This shift marks the end of an era in which ranking on the first page of a search engine was the ultimate goal for digital marketing professionals.

Internal Queries and Secondary Discovery

One of the most revealing aspects of how generative AI processes information is the widespread use of fan-out queries, which are secondary, internal searches used to refine and validate an initial answer. Analysis shows that nearly 90% of user prompts trigger these follow-up actions, where the AI generates its own specific search terms to fill in gaps in its knowledge or to verify complex claims. Interestingly, about 33% of final citations come exclusively from these fan-out searches, many of which involve hyper-specific queries that have zero traditional search volume on platforms like Google. This means that a significant portion of the sources cited by the AI are found through paths that humans rarely take when searching manually. These internal searches focus on the long-tail nuances of a topic, seeking out niche data or specific technical details that might be buried deep within a document or on a highly specialized website that does not rank for broader terms.
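As a rough illustration of what these percentages could mean in absolute terms, the sketch below combines them with the prompt and page counts reported earlier in the article. It assumes all of the figures describe the same dataset, and it rounds "nearly 90%" and "about 33%" to exact values, so the output is an estimate and not a number from the study.

```python
# Figures reported in the article; rounding them to exact values and
# combining them into one dataset are assumptions made for illustration.
PROMPTS = 15_000
RETRIEVED_PAGES = 548_000
CITED_PERCENT = 15          # share of retrieved pages that are cited
FAN_OUT_PROMPT_PERCENT = 90  # "nearly 90%" of prompts trigger fan-out queries
FAN_OUT_ONLY_PERCENT = 33    # "about 33%" of citations come only from fan-out


def percent_of(total: int, percent: int) -> int:
    """Integer percentage of a total, avoiding floating-point noise."""
    return total * percent // 100


prompts_with_fan_out = percent_of(PROMPTS, FAN_OUT_PROMPT_PERCENT)
total_citations = percent_of(RETRIEVED_PAGES, CITED_PERCENT)
fan_out_only_citations = percent_of(total_citations, FAN_OUT_ONLY_PERCENT)

print(f"prompts with fan-out queries: {prompts_with_fan_out:,}")
print(f"citations found only via fan-out: {fan_out_only_citations:,}")
```

Under these assumptions, about 13,500 prompts trigger fan-out queries, and roughly 27,000 citations are reachable only through them.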

The reliance on these secondary searches illustrates a more sophisticated form of information gathering that bypasses traditional SEO metrics. Because the AI is effectively its own searcher, it creates a demand for content that addresses very specific, low-volume queries that were previously ignored by marketers. These fan-out queries often target validation points or specific how-to steps that require a high degree of precision. For a website to be captured during this secondary phase, it must be structured in a way that allows the AI to easily parse and extract specific data points that solve the sub-problems generated during the model’s reasoning process. This internal logic emphasizes the need for content to be segmented and modular, allowing the AI to “hook” into specific sections that provide the necessary evidence or instructions. This multi-stage selection process creates a secondary layer of competition where the ability to answer a specialized sub-query is just as important as the primary ranking.
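One way a publisher might audit whether a page is modular enough to answer a specific sub-query is sketched below. This is a minimal illustration, not a description of how any AI system actually selects sources: the `## ` heading marker, the sample document, the sample query, and the simple token-overlap score are all assumptions chosen for the example.

```python
import re


def split_sections(text: str) -> dict[str, str]:
    """Split text into sections keyed by heading.

    Assumption: a heading is any line that starts with '## '.
    Text before the first heading is stored under 'intro'.
    """
    sections: dict[str, str] = {}
    current, buffer = "intro", []
    for line in text.splitlines():
        if line.startswith("## "):
            sections[current] = " ".join(buffer).strip()
            current, buffer = line[3:].strip(), []
        else:
            buffer.append(line)
    sections[current] = " ".join(buffer).strip()
    # Drop sections that have no body text.
    return {heading: body for heading, body in sections.items() if body}


def tokens(text: str) -> set[str]:
    """Lowercase alphanumeric word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def best_section(query: str, sections: dict[str, str]) -> tuple[str, float]:
    """Return the heading whose text covers the largest share of query tokens."""
    query_tokens = tokens(query)
    if not query_tokens or not sections:
        raise ValueError("query and sections must be non-empty")
    scores = {
        heading: len(query_tokens & tokens(heading + " " + body)) / len(query_tokens)
        for heading, body in sections.items()
    }
    heading = max(scores, key=scores.get)
    return heading, scores[heading]


# Hypothetical page and hypothetical fan-out query.
DOC = """## Reset the router
Hold the reset button for ten seconds to restore the default password.
## Pricing
The basic plan costs 10 dollars per month.
"""

sections = split_sections(DOC)
heading, score = best_section("how to reset router password", sections)
print(heading, round(score, 2))
```

A low best score for a sub-query the page is supposed to cover suggests that the answer is missing or scattered across sections instead of being contained in one self-contained block.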

Strategic Implications for Content Creators

The Persistence of Search Visibility

Despite the unique and often unpredictable way that artificial intelligence processes information, traditional search engine optimization remains a vital foundation for achieving citations. There is a strong and measurable correlation between high rankings on traditional platforms and the likelihood of being cited in a generative response. Research indicates that pages appearing in the top 20 search results account for more than half of all cited sources, which suggests that the AI’s initial discovery pool is still heavily influenced by established ranking algorithms. Furthermore, a page holding the number-one position on a traditional search results page is 3.5 times more likely to be cited than pages that fall outside the top 20. So while the AI is selective, it appears to use traditional authority signals as a primary filter to narrow down the large pool of potential sources it could evaluate during its initial research and retrieval phase.

The interaction between traditional rankings and AI citations varies significantly with the nature of the user’s inquiry. For instance, product discovery searches and practical how-to guides tend to see much higher attribution rates than validation searches, where the AI might be looking for a simple confirmation of a fact. In the product discovery and how-to categories, the AI relies heavily on well-structured, high-ranking pages to provide the comprehensive detail required for a satisfying answer. This suggests that the transition to an AI-driven web does not mean abandoning traditional best practices but rather augmenting them to meet higher standards of synthesis. Content that already performs well in standard search provides the trust signals that encourage the AI to move it from the general retrieval pool into the final, cited output. Maintaining a strong presence in the top tier of search remains the most effective way to enter the research funnel in the first place.

The Evolution Toward Synthesis Optimization

The overarching trend in digital strategy indicates a necessary shift from basic search engine optimization to a more advanced practice known as synthesis optimization. To be featured prominently in AI answers, content must not only be discoverable by automated crawlers but must also provide the precise context and authoritative data that a model deems essential during its final drafting stage. The analysis of thousands of prompts shows that appearing in the research phase is merely the first hurdle in a multi-stage selection process. Moving forward, organizations should prioritize technical documentation, detailed case studies, and structured data sets that are easy for an AI to digest and reproduce. Providing clear, unambiguous answers to both primary and secondary questions will be the key to maintaining visibility as generative models become the primary gateway to information for the general public.

Future strategies should involve a deep analysis of the types of follow-up questions an AI might generate when researching a specific industry or service. By preemptively answering these fan-out queries, creators can ensure that their content remains relevant even when the AI digs deeper into a topic. This approach requires information-dense content in which every paragraph serves a specific purpose, rather than filler designed only for keyword density. Professionals should aim to build repositories of knowledge that function as authoritative nodes within the AI’s research network. Aligning content structure with the synthesis requirements of large language models improves the odds of surviving the 85% discard rate. Brands that adopt these techniques can remain influential in an environment where the AI acts as both the librarian and the final narrator of the digital world’s collective knowledge.