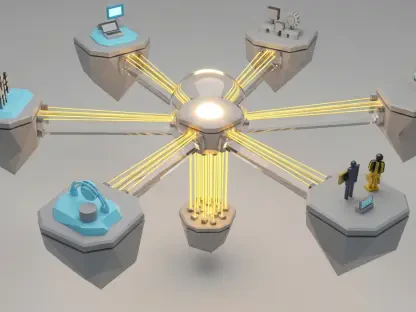

A global leader in SEO, content marketing, and data analytics, Anastasia Braitsik stands at the forefront of the rapidly evolving intersection between paid search and creative technology. As Google integrates sophisticated AI models like Veo and Nano Banana into its advertising ecosystem, the traditional boundaries between technical campaign management and artistic production are blurring. In this discussion, we explore the tactical realities of Google Ads Asset Studio, examining how practitioners can bridge the gap between automated generation and high-performing marketing assets. Our conversation covers the practical navigation of creative constraints, the shifting economics of agency labor, the technical nuances of product fidelity, and the looming legal landscape for synthetic content.

Asset Studio lacks scene-level prompt control and often blocks human faces. How do you navigate these creative constraints to build a compelling narrative, and what specific steps ensure the final video output remains professional and high-quality? Please elaborate with a step-by-step approach to managing these limitations.

The current iteration of Asset Studio can feel like a series of frustrating dead ends when you first attempt to direct a narrative, primarily because the “Veo” version integrated here lacks the advanced API controls found in the standalone model. To navigate this, I start by abandoning the hope for complex human-centric storytelling, as the system consistently triggers errors when it detects anything resembling a specific individual or a clear human face. Instead, I focus on a “tight-crop” strategy, selecting assets that showcase hands, partial torsos, or abstract movements that Google’s AI can actually process without flagging. My step-by-step approach is to first curate a library of high-resolution product shots, then test each one through the animation engine to see how Google decides to move the “camera,” since we have no manual control over pacing or lighting. To ensure a professional finish, I rely heavily on the preloaded audio library to mask the lack of custom sound design, carefully matching the rhythm of the AI’s generated motion to the available beats. It is a process of trial and error in which you must embrace the role of an editor rather than a director, refining the abstract scenes until they coalesce into a clean, ten-second motion ad.

Shifting production duties to paid search managers often leads to unpriced labor like manual logo adaptation and script writing. How should agencies adjust their service contracts to reflect these new creative responsibilities, and what tactics help balance this production work with core campaign management?

For years, the standard operating procedure for a paid search manager was to simply push back on the creative team, demanding vertical versions or better hooks, but Asset Studio has effectively moved the production bottleneck directly onto our desks. This shift represents a fundamental change in ownership: we are now manually adapting logos to various aspect ratios and writing voiceover scripts, tasks that were never historically priced into a standard search management fee. Agencies must respond by revisiting their contract scopes to include “Creative Strategy and Asset Adaptation” as a distinct billable service, rather than treating it as an administrative byproduct of campaign setup. To balance this without burning out, I recommend time-blocking specific “production sprints” in which the manager focuses solely on generating and editing these assets, so that this new creative work doesn’t cannibalize the time needed for bid adjustments and keyword optimization. It is about recognizing that while these tools make production faster than a traditional film crew, the human labor required to filter out the “AI slop” and ensure brand alignment is a significant, specialized effort that deserves its own line item.
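To make the logo-adaptation labor concrete, here is a minimal sketch of the arithmetic involved in fitting one logo into several ad canvases. It is not part of Asset Studio or any Google Ads API. The `fit_logo` helper, the `Placement` type, the 10% safe margin, and the three canvas sizes (common 16:9, 1:1, and 9:16 pixel dimensions) are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Placement:
    """Scaled logo size and top-left offset on a canvas, in pixels."""
    width: int
    height: int
    x: int
    y: int


def fit_logo(logo_w: int, logo_h: int, canvas_w: int, canvas_h: int,
             margin_pct: int = 10) -> Placement:
    """Scale a logo to fit a canvas, keeping its aspect ratio and centering it.

    Hypothetical helper: margin_pct is an assumed safe margin, given as a
    percentage of each canvas dimension and applied on every side.
    """
    if min(logo_w, logo_h, canvas_w, canvas_h) <= 0:
        raise ValueError("all dimensions must be positive")
    if not 0 <= margin_pct < 50:
        raise ValueError("margin_pct must be in [0, 50)")

    # Usable area after removing the margin from both sides.
    max_w = canvas_w * (100 - 2 * margin_pct) // 100
    max_h = canvas_h * (100 - 2 * margin_pct) // 100

    # Integer cross-multiplication decides which dimension limits the scale.
    if max_w * logo_h <= max_h * logo_w:
        width, height = max_w, max_w * logo_h // logo_w   # width-limited
    else:
        width, height = max_h * logo_w // logo_h, max_h   # height-limited

    return Placement(width, height,
                     (canvas_w - width) // 2, (canvas_h - height) // 2)


# Assumed canvas sizes for illustration only.
CANVASES = {"16:9": (1920, 1080), "1:1": (1080, 1080), "9:16": (1080, 1920)}

if __name__ == "__main__":
    for name, (w, h) in CANVASES.items():
        print(name, fit_logo(400, 100, w, h))
```

Even this simple case yields three different placements for a single logo, which is the kind of repetitive adaptation work that a contract scope would need to account for.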

Tools like Nano Banana 2 allow you to lock product images while reinterpreting the surrounding environment. What criteria do you use to select the five most effective reference images, and which background changes have you found most successful in boosting conversion rates for e-commerce products?

When using Nano Banana 2, the goal is to maintain absolute product integrity while allowing the AI to breathe life into the scene, which requires a very strategic selection of those five reference images. I prioritize images that show the product from distinct angles (front, side, and a forty-five-degree tilt) so that the AI understands the three-dimensional volume of the item before it begins reinterpreting the environment. The most successful background transformations I’ve witnessed involve shifting static studio shots into “lifestyle-adjacent” textures, such as placing a tech gadget on a sleek walnut surface or moving a sneaker into a blurred, high-contrast urban setting. These changes provide a sensory richness that a plain white background lacks, helping the consumer visualize the product in a real-world context without the high cost of a location shoot. By “locking” the product and altering only the surrounding atmosphere, we achieve a high-fidelity look that avoids the uncanny valley often associated with fully generative AI imagery.

Upcoming laws in jurisdictions like New York may soon require disclosures for synthetic performers in advertising. Since current tools use invisible watermarking rather than visible labels, what practical steps can advertisers take to ensure ethical transparency and legal compliance in their video campaigns?

The legal horizon is shifting quickly, particularly with New York’s upcoming law, slated for June 2026, which will mandate clear disclosures for any “synthetic performers” in advertisements. Currently, Asset Studio provides something of an accidental safety net: its strict limitations on generating human faces mean most users aren’t even able to create the type of content that would trigger these specific performer disclosure requirements. However, for those looking to be proactive and ethical, I suggest looking into Google’s SynthID, which already invisibly tags AI-generated images, providing a layer of provenance that can be audited if compliance questions arise. Since there is currently no “AI sparkle” or visible checkbox within the Google Ads UI to label these ads, advertisers should consider adding a small, unobtrusive text overlay in the final frame of the video if they are using highly realistic generative elements. Staying ahead of these regulations isn’t just about avoiding fines; it’s about maintaining a transparent relationship with an audience that is becoming increasingly skeptical of “AI slop” and synthetic content.

Quickly built templates can sometimes achieve ten times the click-through rate of expensive, professionally produced ads. What messaging elements are most critical when using these automated tools, and how do you use the trim feature to optimize a video’s pacing for YouTube viewers?

It is a striking reality that a template-built video assembled in 30 minutes can sometimes outperform a high-budget production, largely because these automated tools force a focus on clarity over cinematic fluff. The most critical messaging element is the immediate value proposition; Asset Studio’s templates allow us to test bold, text-heavy hooks that land within the first two seconds, which is where most professional ads fail by opening too slowly. I use the “Trim” feature as a surgical tool to cut out the long, cinematic intros often found in client-provided assets, jumping straight to the action or the core product benefit to capture the YouTube viewer’s fleeting attention. By shortening the build-up and using the built-in voiceover tools to reiterate the call to action, we create a sense of urgency and directness that resonates more effectively with modern digital audiences. This approach shows that while production quality is nice, the pacing and the script are the actual engines driving that 10x increase in click-through rates.
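For readers who want to check a “10x” claim against their own reports, the comparison reduces to simple arithmetic: click-through rate is clicks divided by impressions, and the lift is the ratio of the two rates. The sketch below is illustrative only. The function names are hypothetical, and the click and impression figures are made-up placeholders, not results from any campaign discussed here.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (0.05 means 5%)."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    if clicks < 0:
        raise ValueError("clicks cannot be negative")
    return clicks / impressions


def ctr_lift(test_clicks: int, test_impressions: int,
             base_clicks: int, base_impressions: int) -> float:
    """How many times higher the test ad's CTR is than the baseline's.

    Computed by cross-multiplying the counts so that only a single
    division is needed.
    """
    if test_impressions <= 0 or base_impressions <= 0:
        raise ValueError("impressions must be positive")
    if base_clicks <= 0:
        raise ValueError("baseline needs at least one click to compute a ratio")
    return (test_clicks * base_impressions) / (base_clicks * test_impressions)


if __name__ == "__main__":
    # Placeholder numbers for illustration, not real campaign data.
    template_ad = (500, 10_000)   # clicks, impressions
    produced_ad = (50, 10_000)
    print(f"template CTR: {ctr(*template_ad):.2%}")
    print(f"produced CTR: {ctr(*produced_ad):.2%}")
    print(f"lift: {ctr_lift(*template_ad, *produced_ad):.1f}x")
```

A ratio like this says nothing about statistical confidence, so low-volume tests should be read with caution before concluding that one creative beats another.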

What is your forecast for the role of AI-driven creative tools in the digital advertising industry?

I believe we are moving toward a future where the distinction between a “media buyer” and a “creative director” will almost entirely vanish for mid-market accounts, as these tools become the primary interface for campaign execution. While Asset Studio isn’t yet a “game changer” for global brands with massive production budgets, it is rapidly democratizing the ability for smaller players to compete in the video space, effectively killing the excuse that video is too expensive to test. My forecast is that we will see a massive influx of “good enough” content that prioritizes speed and conversion data over artistic perfection, leading to a landscape where the winning advertisers are those who can iterate on scripts and hooks in hours rather than weeks. Ultimately, the role of the expert will shift from “maker” to “curator,” where the human’s most valuable skill is knowing which AI-generated assets to trash and which ones have the strategic soul necessary to actually drive a sale.