The traditional wall between creative imagination and professional-grade video output has effectively crumbled as high-fidelity generative models redefine the baseline for digital communication. In the current landscape, the ability to synthesize complex visual narratives from simple text prompts or structured data is no longer a futuristic curiosity but a foundational requirement for modern marketing and product development. This technological shift marks a departure from the labor-intensive production cycles of the past toward a streamlined ecosystem where speed and scale depend largely on the clarity of one’s instructions. As businesses face an increasingly fragmented attention economy, adopting these tools has shifted from an experimental luxury to a survival strategy for brands trying to stay relevant across diverse digital platforms.

The current environment suggests that video is a primary driver of user engagement, yet the cost of traditional filming often prevents companies from pursuing localized or personalized outreach. AI-driven video generators address this gap by automating the most time-consuming aspects of the creative process, such as lighting, set design, and even the physical presence of actors. However, the market has expanded so rapidly that the sheer variety of options has created a secondary challenge: selecting a tool that aligns with specific organizational goals. This review examines the current state of the industry, evaluating the technical underpinnings and practical utility of the main categories of platforms now reshaping digital video.

The Evolution of AI-Driven Visual Communication

The progression of visual communication technology has moved through several distinct phases, starting with basic motion graphics and culminating in the sophisticated neural networks in use today. Initially, the industry relied on rigid templates and basic automation to speed up production, but these methods lacked the flexibility to create truly original or photorealistic content. The breakthrough came with deep generative models, first generative adversarial networks and later diffusion models, which learn the underlying structure of a video frame rather than treating it as a collection of independent pixels. By training on vast datasets of human movement and cinematic lighting, these systems learned to predict how a scene should evolve over time, producing the fluid and coherent visuals that characterize modern AI video.

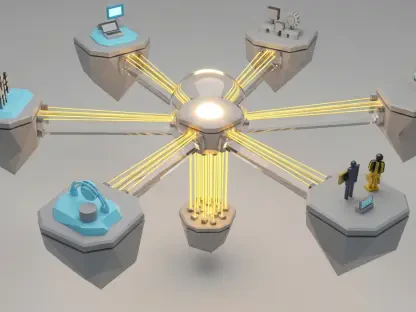

These tools now play a central role in bridging the gap between static data and dynamic storytelling. They rely on architectures such as latent diffusion models and transformers to translate semantic meaning into visual representation. In practice, a prompt describing a “sunset over a neon cityscape” is first encoded into a numerical representation of its meaning, which then guides a generator that must account for atmospheric depth, reflections, and the specific aesthetic of cyberpunk photography. This evolution has moved video production out of the specialized studio and into the hands of product managers and marketers, democratizing the ability to create high-impact visual assets.

Furthermore, the emergence of this technology has forced a reevaluation of the value chain in digital media. Where a professional camera crew was once the gatekeeper of quality, the emphasis has shifted toward prompt engineering and strategic direction. This does not eliminate the need for human creativity; rather, it amplifies it by removing the mechanical friction of traditional filming. Looking at the progress made from 2026 to 2028, the focus remains on improving temporal consistency: ensuring that every frame in a generated sequence preserves the same subjects, geometry, and detail as the one before it.
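
One way to make temporal consistency concrete is to measure how much each frame differs from the next and flag sudden jumps. The sketch below is a deliberately crude proxy, assuming frames arrive as equal-sized grayscale grids of 0-255 integers; the function names and the threshold are illustrative. Raw pixel differences also flag legitimate cuts and fast camera motion, which is why serious evaluations typically use motion-compensated metrics instead.

```python
from typing import List

# A frame is assumed to be an equal-sized grid of grayscale values (0-255).
Frame = List[List[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average per-pixel absolute difference between two frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count


def flag_jumps(frames: List[Frame], threshold: float) -> List[int]:
    """Indices i where the change from frame i to frame i + 1 exceeds the threshold.

    A crude consistency proxy: it cannot tell a glitch from a deliberate
    cut or fast camera motion.
    """
    return [
        i
        for i in range(len(frames) - 1)
        if mean_abs_diff(frames[i], frames[i + 1]) > threshold
    ]


if __name__ == "__main__":
    steady = [[100, 100], [100, 100]]
    drift = [[102, 101], [100, 99]]
    glitch = [[200, 10], [0, 255]]
    print(flag_jumps([steady, drift, glitch, drift], threshold=20.0))
```

In the small example, the gentle drift between the first two frames passes, while the transitions into and out of the glitched frame (indices 1 and 2) are flagged.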

Specialized Categories of AI Video Generation

Avatar-Led Explainer Platforms

One of the most mature branches of this technology involves synthetic presenters that deliver scripts with remarkably human-like accuracy. These platforms use lip-syncing algorithms and facial mapping to keep an AI-generated avatar’s mouth movements closely synchronized with a given audio track. Convincing delivery is not merely about moving a mouth in time with words; it also involves subtle micro-expressions, such as eye blinks and head tilts, that keep the avatar out of the uncanny valley. The technical achievement lies in seamlessly blending a pre-recorded digital likeness with dynamically generated speech.

The significance of these avatar platforms in the enterprise world is considerable, particularly for companies that need to produce training materials or customer support videos in multiple languages. Instead of hiring different actors for every region, a brand can use a single digital twin and translate the script into dozens of languages. The system then adjusts the avatar’s mouth movements to match the phonemes of the new language. This level of localization was previously impractical at scale, and it marks a major shift in how global organizations maintain a consistent brand voice across disparate markets.
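
Matching an avatar to phonemes can be pictured as a lookup from timed speech sounds to mouth shapes (visemes). The following is a simplified sketch, assuming phoneme timings are already available from a speech synthesizer or forced aligner (not shown); the `PHONEME_TO_VISEME` table and its labels are hypothetical and far smaller than any production inventory. Translating the script produces a new phoneme sequence, and rerunning the same mapping produces a new mouth track for the same avatar.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical, heavily simplified phoneme-to-viseme table.
# Real systems use larger, language-specific inventories.
PHONEME_TO_VISEME = {
    "p": "closed", "b": "closed", "m": "closed",
    "f": "lip_teeth", "v": "lip_teeth",
    "a": "open", "e": "wide", "i": "wide",
    "o": "round", "u": "round",
}


@dataclass
class Keyframe:
    start: float  # seconds
    end: float    # seconds
    viseme: str


def visemes_for(phonemes: List[Tuple[str, float, float]]) -> List[Keyframe]:
    """Turn timed phonemes (symbol, start, end) into mouth-shape keyframes.

    Consecutive phonemes that share a viseme and touch in time are merged,
    so the avatar holds one mouth shape instead of re-triggering it.
    """
    keyframes: List[Keyframe] = []
    for symbol, start, end in phonemes:
        viseme = PHONEME_TO_VISEME.get(symbol, "neutral")  # unknown sounds -> rest pose
        if keyframes and keyframes[-1].viseme == viseme and keyframes[-1].end == start:
            keyframes[-1].end = end  # extend the previous keyframe
        else:
            keyframes.append(Keyframe(start, end, viseme))
    return keyframes
```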

Text-Based Video Editing and Iteration

Another essential component of the modern AI video toolkit is the paradigm of editing through text. This technology functions by transcribing video content into a written document, allowing users to modify the visual and audio elements simply by deleting or rearranging words in the transcript. This approach eliminates the need for a traditional timeline-based editor, which often requires significant technical training to master. By treating video as a text-based asset, teams can iterate on their content with the same speed they would use to edit a blog post or an email.

This functionality is particularly transformative for long-form content like webinars or interviews. If a speaker makes a mistake or includes irrelevant information, the editor can simply strike that sentence from the text, and the AI will automatically stitch the remaining footage together with a smooth transition. Some platforms even offer “overdub” audio, where a cloned version of the speaker’s voice inserts new words into the video without requiring a reshoot. This capability makes text-based editing especially valuable for fast-moving product teams who need to update their tutorials frequently as software features evolve.
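
Under the hood, deleting words from a transcript amounts to computing which time ranges of the source footage to keep. The sketch below assumes each transcribed word carries start and end timestamps, as word-level speech-to-text output commonly does; the `Word` type and `keep_segments` function are hypothetical names, and the actual trimming, transition smoothing, and overdub steps are left to a rendering backend that is not shown.

```python
from dataclasses import dataclass
from typing import List, Set, Tuple


@dataclass
class Word:
    text: str
    start: float  # seconds into the source video
    end: float


def keep_segments(words: List[Word], deleted: Set[int]) -> List[Tuple[float, float]]:
    """Convert a transcript with some word indices struck out into the
    (start, end) ranges of source footage to keep.

    Runs of consecutive kept words are merged into one segment, so pauses
    between kept words stay in the edit.
    """
    segments: List[Tuple[float, float]] = []
    for i, word in enumerate(words):
        if i in deleted:
            continue  # struck from the transcript, so its footage is dropped
        if i > 0 and (i - 1) not in deleted:
            # The previous word was kept too, so extend the current segment.
            start, _ = segments[-1]
            segments[-1] = (start, word.end)
        else:
            # First word, or first word after a deletion: start a new segment.
            segments.append((word.start, word.end))
    return segments
```

For example, striking “um” from the transcript “So um today we ship” (timestamps 0.0-0.3, 0.3-0.8, 0.8-1.2, 1.2-1.4, 1.4-1.8) yields the segments `(0.0, 0.3)` and `(0.8, 1.8)`, which the editor would then join with a smoothed transition.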

Generative Visuals and Cinematic Storytelling

At the high end of the creative spectrum, generative visuals use diffusion models to build entire worlds from scratch. These tools do not rely on existing footage or avatars; instead, they generate every pixel based on a conceptual prompt. The performance of these models is measured by their fidelity to the laws of physics and their ability to maintain artistic style throughout a sequence. For example, a cinematic storytelling tool might be tasked with creating a dreamlike sequence for a luxury brand, requiring a specific palette, lighting, and camera movement that mimics the work of a professional cinematographer.
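
Diffusion models learn to generate by reversing a gradual noising process. During training, a clean sample `x_0` is corrupted in closed form as `x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps`, where `abar_t` is the running product of `(1 - beta_t)` over the noise schedule and `eps` is Gaussian noise; a network is then trained to predict that noise so it can be removed step by step. The sketch below shows only this forward step with a DDPM-style linear schedule, on a plain list of numbers standing in for pixel or latent values. The schedule endpoints are common defaults rather than those of any particular video model, and the denoising network itself is not shown.

```python
import math
import random
from typing import List


def linear_betas(steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """DDPM-style linear noise schedule: per-step noise variances beta_t (steps > 1)."""
    return [beta_start + (beta_end - beta_start) * t / (steps - 1) for t in range(steps)]


def alpha_bars(betas: List[float]) -> List[float]:
    """Cumulative product of (1 - beta_t): the fraction of signal variance left at step t."""
    out: List[float] = []
    running = 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


def noisy_sample(x0: List[float], t: int, abar: List[float], rng: random.Random) -> List[float]:
    """Closed-form forward step: x_t = sqrt(abar_t) * x0 + sqrt(1 - abar_t) * eps."""
    signal_scale = math.sqrt(abar[t])
    noise_scale = math.sqrt(1.0 - abar[t])
    return [signal_scale * x + noise_scale * rng.gauss(0.0, 1.0) for x in x0]
```

Early steps leave the sample almost untouched, while by the last step of a 1,000-step schedule almost no signal remains, which is why generation can start from pure noise.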

The use of high-fidelity generative AI allows brands to create emotional experiences that were once reserved for high-budget television commercials. Because the AI can simulate complex textures like fur, water, and fabric with extreme detail, it is now possible to produce atmospheric content that feels visceral and authentic. This category of tool is less about explaining a technical feature and more about establishing a mood or a brand identity. It provides a way for creative directors to experiment with visual concepts that would be too expensive or physically impossible to film in the real world.

Rhythmic and Audio-Visual Layering

In the realm of short-form content, synchronization between sound and image is a key driver of viewer retention. Specialized tools now focus on the rhythmic side of video production, ensuring that transitions and visual effects are precisely timed to the beat of a musical track. This is particularly relevant for social media platforms like TikTok and Instagram, where the flow of a video is often as important as its message. Using audio-analysis algorithms, these systems can automatically detect “drops” or tempo shifts in a song and align the visual cuts accordingly.

This layering increases the impact of the content, because viewers tend to respond strongly to rhythmic patterns. When a video “hits” on every beat, it conveys a sense of polish and professionalism that keeps the viewer from scrolling past. For marketers, this means that even a simple product showcase can be turned into an engaging, high-energy clip with minimal manual effort. This capability matters in an era where the first three seconds of a video often determine whether a campaign succeeds or fails.
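
Beat alignment of this kind reduces to snapping proposed cut points onto the track’s beat times. The sketch below assumes an earlier audio-analysis step has already estimated a constant tempo and the time of the first beat; `beat_grid` and `snap_cuts` are illustrative names. Real songs drift in tempo, so in practice one would pass detected beat timestamps directly to `snap_cuts`, which accepts any sorted, non-empty list.

```python
from bisect import bisect_left
from typing import List


def beat_grid(bpm: float, duration: float, offset: float = 0.0) -> List[float]:
    """Beat timestamps (seconds) for a constant-tempo track, starting at offset."""
    interval = 60.0 / bpm
    count = int((duration - offset) / interval) + 1
    # Compute each beat from its index to avoid accumulating float error.
    return [offset + i * interval for i in range(count)]


def snap_cuts(cuts: List[float], beats: List[float]) -> List[float]:
    """Move each proposed cut to the nearest beat so transitions land on the beat."""
    snapped = []
    for cut in cuts:
        i = bisect_left(beats, cut)
        # The nearest beat is either just before or just after the cut.
        candidates = beats[max(i - 1, 0): i + 1]
        snapped.append(min(candidates, key=lambda b: abs(b - cut)))
    return snapped
```

At 120 BPM over four seconds, cuts proposed at 0.2 s, 1.3 s and 3.9 s snap to 0.0 s, 1.5 s and 4.0 s.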

Current Trends in Scalability and Personalization

The most significant trend currently shaping AI video is the move toward hyper-personalization at scale. In previous years, a brand might create one high-quality video for its entire audience, but today’s technology allows for thousands of unique variants. By integrating video generation with customer databases, companies can produce content that addresses a viewer by name, references their past purchases, or offers a recommendation based on their browsing history. At the same time, consumers increasingly expect personalized experiences, and that expectation is changing how content is produced and distributed.
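
At its simplest, producing thousands of variants starts with filling a script template from each customer record before the script is sent to a voice or avatar renderer (not shown). This sketch uses Python’s standard `string.Template`; the field names (`first_name`, `last_purchase`, `recommendation`) and the script wording are hypothetical.

```python
from string import Template
from typing import Dict, List

# Hypothetical narration template; $-placeholders are filled per customer.
SCRIPT = Template(
    "Hi $first_name, thanks for picking up the $last_purchase. "
    "Based on what you've been browsing, you might like the $recommendation."
)


def render_scripts(customers: List[Dict[str, str]]) -> Dict[str, str]:
    """Produce one personalized narration script per customer id.

    safe_substitute leaves a placeholder visible when a field is missing,
    instead of raising, so incomplete records can be caught in review.
    """
    return {c["id"]: SCRIPT.safe_substitute(c) for c in customers}
```

The choice of `safe_substitute` is deliberate: a record without a purchase history produces a script still containing `$last_purchase`, which a simple check can route to review rather than crashing a batch run or rendering a video with a blank.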

Another emerging development is the integration of AI video tools directly into existing productivity stacks. Rather than being standalone platforms, these capabilities are being embedded into customer relationship management systems and e-commerce backends. This allows for a more automated workflow where a video is triggered by a specific user action, such as signing up for a service or abandoning a shopping cart. The innovation here is not just in the video itself, but in the infrastructure that allows it to be generated and delivered in real time without human intervention. This trend is moving the industry toward a future where “one-size-fits-all” content is considered obsolete.
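
An event-triggered workflow like this can be sketched as a small dispatcher that maps product events to video templates and queues a generation job. The event names, template names, and the in-memory `VideoQueue` are hypothetical stand-ins; a real deployment would more likely publish jobs to a message broker consumed by a worker that calls the generation service.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VideoJob:
    template: str
    user_id: str
    context: Dict[str, str]


# Hypothetical mapping from product events to video templates.
EVENT_TEMPLATES = {
    "signup": "welcome_walkthrough",
    "cart_abandoned": "cart_reminder",
}


@dataclass
class VideoQueue:
    jobs: List[VideoJob] = field(default_factory=list)

    def handle_event(self, event_type: str, user_id: str, context: Dict[str, str]) -> bool:
        """Queue a video job if this event type has a template; report whether one was queued."""
        template = EVENT_TEMPLATES.get(event_type)
        if template is None:
            return False  # not every event should trigger a video
        self.jobs.append(VideoJob(template, user_id, context))
        return True
```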

Real-World Applications in Product Experience and E-Commerce

Real-world applications of AI video are most visible in e-commerce, where the technology is used to create dynamic product pages. Instead of relying on static images, retailers can generate short video clips for every item in their catalog, showing the product in different environments or highlighting its key features in action. This can lift conversion rates because it gives shoppers a more complete understanding of the product’s value. For instance, a clothing brand might use AI to show how a single dress looks on various body types or in different lighting conditions, all without conducting a single photoshoot.
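
Generating clips for every catalog item quickly becomes a combinatorial exercise: each product is crossed with each environment and, for apparel, each body type. A minimal sketch of enumerating those generation requests, with hypothetical field names:

```python
from itertools import product
from typing import Dict, List


def variant_jobs(skus: List[str], settings: List[str], body_types: List[str]) -> List[Dict[str, str]]:
    """One generation request per product x setting x body-type combination."""
    return [
        {"sku": sku, "setting": setting, "body_type": body_type}
        for sku, setting, body_type in product(skus, settings, body_types)
    ]
```

The multiplication is what to plan for: 500 products with three settings and three body types already means 4,500 clips to generate and review.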

In the software industry, AI video is being deployed to improve onboarding for new users. Instead of a generic welcome email, a user might receive a personalized video walkthrough of the features they are most likely to use. This approach helps flatten the initial learning curve and can improve long-term retention. By turning static documentation into an interactive visual guide, companies can ensure that customers feel supported from their very first interaction. These applications show that the technology is not just about entertainment; it is a practical tool for improving the overall product experience.

Challenges and Technical Limitations

Despite the rapid progress, the technology faces several technical hurdles, most notably the issue of factual accuracy and “hallucinations.” Because these models are based on probability rather than a database of facts, they can sometimes generate visuals or audio that are technically incorrect or nonsensical. For a product video, this could mean showing a feature that does not exist or providing incorrect instructions. Therefore, human oversight remains a mandatory part of the production process to ensure that the final output aligns with reality.
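
Part of that human oversight can be supported by simple automated checks. The sketch below catches one narrow failure, a script that mentions a feature the product does not have, assuming (hypothetically) that the script generator wraps feature names in `[[...]]` markers and that a lowercase list of shipped features is maintained. Anything it returns would go to a human reviewer; it says nothing about whether the visuals themselves are accurate.

```python
import re
from typing import List, Set

# Hypothetical convention: the script generator tags feature names as [[Feature Name]].
FEATURE_TAG = re.compile(r"\[\[([^\]]+)\]\]")


def features_needing_review(script: str, shipped_features: Set[str]) -> List[str]:
    """Return tagged feature names that are not in the (lowercase) shipped list."""
    mentioned = FEATURE_TAG.findall(script)
    return [name for name in mentioned if name.strip().lower() not in shipped_features]
```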

Regulatory issues and ethical concerns also present significant obstacles to widespread adoption. The ability to create realistic digital likenesses raises questions about consent and the potential for misuse in deepfakes. Furthermore, the copyright status of AI-generated content remains a subject of intense legal debate, and many companies are hesitant to fully commit to AI production until there is a clearer framework for ownership of the resulting assets. These obstacles are being addressed through digital watermarking and stricter verification protocols, but they remain a point of friction for risk-averse organizations.
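
Verification protocols generally rest on attaching signed provenance metadata to a file so that later tampering can be detected. The sketch below illustrates that idea with a detached JSON manifest signed using an HMAC from Python’s standard library; it is not an embedded watermark and not an implementation of any standard such as C2PA, and the `generator` label and key handling are assumptions. Because HMAC relies on a shared secret, anyone verifying must hold the same key; public-key signatures avoid that limitation.

```python
import hashlib
import hmac
import json


def _payload(manifest: dict) -> bytes:
    """Canonical bytes to sign: sorted-key JSON of everything except the signature."""
    claims = {k: v for k, v in manifest.items() if k != "signature"}
    return json.dumps(claims, sort_keys=True).encode("utf-8")


def sign_manifest(video_bytes: bytes, generator: str, key: bytes) -> dict:
    """Build a provenance manifest for a video file and sign it with HMAC-SHA256."""
    manifest = {
        "sha256": hashlib.sha256(video_bytes).hexdigest(),  # binds the manifest to this file
        "generator": generator,  # hypothetical label for the producing tool
        "ai_generated": True,
    }
    manifest["signature"] = hmac.new(key, _payload(manifest), hashlib.sha256).hexdigest()
    return manifest


def verify_manifest(video_bytes: bytes, manifest: dict, key: bytes) -> bool:
    """Check that neither the file nor the manifest claims changed after signing."""
    if manifest.get("sha256") != hashlib.sha256(video_bytes).hexdigest():
        return False  # the file was altered after signing
    expected = hmac.new(key, _payload(manifest), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    return hmac.compare_digest(expected, manifest.get("signature", ""))
```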

The Future of Automated Digital Storytelling

Looking toward the future, the most exciting development in the field is the move toward real-time, interactive video generation. We are approaching a point where the distinction between a pre-recorded video and a live digital interaction will blur. Imagine a customer support scenario where a user interacts with a video avatar that responds to their questions in real time, with the visual and audio components generated on the fly. This breakthrough would represent the ultimate evolution of personalized communication, turning a passive viewing experience into an active dialogue.

The long-term impact of this technology will likely be the broad democratization of high-end cinematic production. As these tools become more intuitive and computational costs fall, the ability to tell complex, visually stunning stories will depend far less on a person’s technical skills or budget. This will lead to a surge of creative content across all sectors of society, from education and entertainment to corporate communication. The future of digital storytelling is one where the main barrier to entry is the quality of the idea itself, marking a fundamental shift in how we share information and connect with one another.

Assessment of the AI Video Landscape

The analysis of the current AI video landscape reveals a market that has moved from novelty to necessity. The technology has matured to the point where it offers genuine utility across various industries, particularly e-commerce and enterprise communication. The most successful implementations are those that match the tool to the intended outcome, whether that means avatars for localization or generative models for emotional branding. The flexibility of these systems allows a level of scalability that was previously unimaginable, enabling brands to produce high volumes of content without sacrificing quality.

The review also shows that while the technical capabilities are impressive, the human element remains indispensable for ensuring accuracy and ethical integrity. The limitations around hallucinations and legal rights highlight the need for a balanced approach to automation. Even so, the overall trajectory of the industry remains strongly positive, with significant breakthroughs on the horizon in real-time interaction and hyper-personalization. For businesses looking to stay competitive, the verdict is clear: integrating AI video into the communication strategy is no longer optional.

In the final assessment, the impact of AI video generation on the digital content industry is transformative. The shift toward automated storytelling has sped up production and opened new possibilities for creative expression. As the technology continues to evolve, it is likely to become a primary medium for digital interaction, offering a more engaging and accessible way to share knowledge. The transition to AI-driven video production represents a fundamental milestone in the history of communication, setting the stage for a digital experience that is more dynamic, personalized, and visually rich than ever before.