The modern search engine optimization landscape has reached a point where a digital strategist can condense an entire week of manual spreadsheet labor into a twenty-minute technical exercise. By feeding a pair of comprehensive data exports into a large language model such as Claude or ChatGPT, an analyst can generate a polished report complete with topic clusters, gap analysis tables, and prioritized content briefs. This shift is a major leap in efficiency, yet it introduces a dangerous paradox: the speed of execution often outpaces the depth of the underlying strategy. The polish of these automated outputs can create a false sense of security, leading businesses to invest thousands of dollars in content campaigns that look statistically sound but fail to address the nuances of actual buyer intent or market positioning.

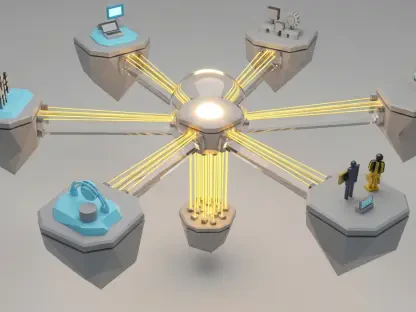

Success in the current era of search requires moving beyond the mechanics of prompting to a structured, data-first workflow that turns artificial intelligence from a basic summarization tool into a high-leverage strategic asset. The software can organize and summarize massive datasets with ease, but it cannot determine on its own which keywords drive actual revenue and which merely generate informational noise. A strategy that relies solely on automation risks being steered by vanity metrics. The focus must therefore shift from how fast an analysis can be performed to how accurately the data can be interpreted to drive business growth. The following sections explain how to make this transition so that every automated insight is grounded in rigorous human validation and real-world market intelligence.

Why Your 20-Minute AI Analysis Might Be a Strategic Liability

The primary danger of modern automation in the search industry lies in the “plausibility trap,” a phenomenon where the output of a language model looks exceptionally confident and professional while lacking the necessary nuance of human business strategy. When an artificial intelligence processes a list of thousands of keywords, it applies mathematical patterns to group them into categories, but it does not understand the difference between a high-volume keyword that attracts curious window shoppers and a low-volume keyword that signals a ready-to-buy customer. For instance, a model might suggest prioritizing a topic cluster around “how to install a roof rack” because the search volume is high, ignoring the fact that the company’s primary goal is to sell premium, pre-installed cargo systems. This disconnect creates a strategic liability where a brand may win the battle for traffic while losing the war for conversion and return on investment.

Moreover, the apparent perfection of automated tables and charts can mask significant gaps in the raw data itself. AI assistants are tuned to be helpful, and when they encounter gaps in the provided dataset they often “hallucinate,” filling those gaps with fabricated information. Suppose an analyst asks for a traffic estimate without providing a direct export from a tool like Semrush or Ahrefs. The model will likely produce a plausible-sounding number drawn from its training data, and that number may be outdated or entirely irrelevant to the specific niche. When decisions rest on the machine’s confident tone rather than the hard reality of the numbers, the strategy is built on a foundation of digital sand. The risk is not just getting the wrong answers but failing to realize they are wrong until the marketing budget has already been spent on ineffective campaigns.

Furthermore, a rapid-fire analysis often fails to account for competitive momentum and the structural health of the pages it evaluates. A static list of ranking positions does not reveal whether a competitor is declining after a recent algorithm update or gaining ground through an aggressive backlink campaign. An automated summary might show that a competitor ranks in the top three for a valuable term. What it cannot detect on its own is the “content decay” that might make that page vulnerable to a well-timed counter-attack. Without a layer of human critical thinking to assess the trajectory of the data, the twenty-minute analysis remains a snapshot of the past rather than a roadmap for the future. True competitive advantage comes from identifying these subtle shifts, which a single snapshot cannot reveal and which broad automated generalizations tend to smooth over.
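Assessing trajectory rather than a single snapshot can start with something as simple as comparing two ranking exports taken weeks apart. The sketch below assumes each snapshot has already been reduced to a hypothetical `{keyword: position}` dictionary. The three-position `min_drop` threshold is an arbitrary illustration, not an industry standard.

```python
def declining_keywords(previous, current, min_drop=3):
    """Return {keyword: (old_position, new_position)} for keywords that lost at
    least `min_drop` positions between two snapshots (a larger number is a worse
    rank). Keywords missing from the current snapshot are skipped, not guessed at."""
    drops = {}
    for keyword, old_pos in previous.items():
        new_pos = current.get(keyword)
        if new_pos is not None and new_pos - old_pos >= min_drop:
            drops[keyword] = (old_pos, new_pos)
    # Largest declines first, so the most vulnerable rankings surface at the top.
    return dict(sorted(drops.items(), key=lambda kv: kv[1][1] - kv[1][0], reverse=True))


# Illustrative positions for one competitor, not real data.
january = {"roof rack": 2, "roof box": 3, "towing capacity calculator": 1, "bike carrier": 5}
march = {"roof rack": 7, "roof box": 4, "towing capacity calculator": 1, "bike carrier": 12}

print(declining_keywords(january, march))
# {'bike carrier': (5, 12), 'roof rack': (2, 7)}
```

A flagged keyword is only a prompt to look closer. Working out whether the drop reflects an algorithm update, content decay, or a rival’s link campaign still takes human investigation.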

The Shift from Data Gathering to Strategic Interpretation

In the traditional search engine optimization landscape, the vast majority of a competitor analysis was consumed by the grueling manual work of categorization, pattern matching, and the construction of pivot tables. Analysts would spend hours, if not days, tagging individual URLs by hand to understand how a rival site structured its information architecture. Today, the availability of advanced language models has shifted the primary bottleneck of the workflow from data processing to data validation. The heavy lifting of organization is now handled in seconds, which allows the human strategist to occupy a more elevated role. The focus has moved away from the “what” of the data toward the “so what” of the strategic implications, requiring a higher level of intellectual rigor to ensure the results align with broader commercial objectives.

Understanding the “content strategy signature” of a competitor is a prime example of this new high-level focus. By using automation to quickly identify whether a rival leads with deep editorial guides, utility-based tools like calculators, or transactional product category pages, an SEO professional can discern the underlying philosophy of a competitor’s success. For example, if a rival is dominating the search results through a massive library of “how-to” articles rather than product pages, it signals a strategy built on capturing top-of-funnel informational intent to build brand authority. This insight, which previously required an exhaustive manual audit, can now be surfaced in minutes. The strategist’s job is then to decide whether to compete head-to-head on that same turf or to find a “blue ocean” opportunity where the competitor has left a gap in transactional coverage.

This shift necessitates a change in the skillset required for top-tier search performance. It is no longer enough to be proficient with software and spreadsheets; the modern practitioner must be a master of “algorithmic skepticism.” This involves the ability to look at an AI-generated topic taxonomy and immediately question why certain clusters were formed and whether those clusters reflect the reality of the buyer’s journey. As the time required to generate a report shrinks, the value of the professional shifts entirely to their ability to interpret that report and turn it into a list of actionable, high-impact tasks. The goal is to spend less time moving cells around in a document and more time analyzing the competitive environment to find the specific point of leverage that will result in a measurable increase in market share.

Dissecting the Competitor Landscape Through Classification and Clustering

The foundation of a truly reliable and strategic analysis begins with the extraction of raw, unadulterated data from industry-standard tools to prevent the aforementioned hallucinations. To build a trustworthy map of the competitive environment, a practitioner should pull comprehensive exports including organic pages, current keyword positions, and estimated traffic momentum. These reports provide the numerical truth that an AI assistant needs to stay grounded. By feeding these specific exports—such as the top one hundred pages by traffic and the top five hundred keywords by volume—into an assistant, the analyst can request a sophisticated topic taxonomy. This process involves the machine assigning each URL to a specific category, such as “Product – Roof Racks” or “Editorial – Buying Guides,” based on the URL path and the context of the ranking keywords.
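Before or alongside asking an assistant for a taxonomy, a deterministic pass over URL paths gives a baseline against which the AI labels can be checked. This is a minimal sketch. The path prefixes and category names in `CATEGORY_RULES` are hypothetical and would need to be written for each competitor’s actual site structure.

```python
from urllib.parse import urlparse

# Hypothetical path-prefix rules; a real taxonomy is built per site.
CATEGORY_RULES = [
    ("/roof-racks/", "Product - Roof Racks"),
    ("/guides/", "Editorial - Buying Guides"),
    ("/blog/", "Editorial - Blog"),
    ("/tools/", "Utility - Tools"),
]


def classify_url(url: str) -> str:
    """Return the category of the first rule whose prefix matches the URL path."""
    path = urlparse(url).path
    for prefix, category in CATEGORY_RULES:
        if path.startswith(prefix):
            return category
    return "Unclassified"


pages = [
    "https://example.com/roof-racks/example-crossbar/",
    "https://example.com/guides/best-roof-box/",
    "https://example.com/blog/best-off-road-accessories/",
    "https://example.com/tools/towing-capacity-calculator/",
    "https://example.com/about/",
]
for url in pages:
    print(url, "->", classify_url(url))
```

Path rules are fast, but they share the weakness of any URL-only label. A commercial comparison page that lives under `/blog/` will still be tagged as a blog post, so ranking-keyword context and human review remain necessary.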

This classification process reveals the structural differences that explain why one site succeeds while another struggles. For instance, a detailed clustering exercise might uncover that a dominant competitor pulls sixty percent of its traffic from a single utility tool, such as a “towing capacity calculator,” rather than from its primary product pages. Without this level of granular dissection, a brand might mistakenly believe it needs to write more blog posts to catch up, when in reality it needs to build a competing piece of functional software. Because the machine can group thousands of data points into thematic buckets, the strategist can see the “forest” of the competitor’s site architecture without getting lost in the “trees” of individual keyword rankings. This panoramic view is essential for identifying where a competitor’s traffic actually originates and which stage of the buyer’s journey it serves.
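Once each page carries a category, a finding like “sixty percent of traffic comes from one tool” is a simple aggregation. This sketch assumes hypothetical rows with `category` and `traffic` fields taken from a top-pages export. The numbers are illustrative only.

```python
from collections import defaultdict


def traffic_share_by_category(rows):
    """Sum estimated traffic per category and return each category's share of the total."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["category"]] += row["traffic"]
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: t / grand_total for category, t in totals.items()}


# Illustrative numbers only, not real competitor data.
rows = [
    {"url": "/tools/towing-capacity-calculator/", "category": "Utility - Tools", "traffic": 6000},
    {"url": "/roof-racks/crossbars/", "category": "Product - Roof Racks", "traffic": 2500},
    {"url": "/guides/best-roof-box/", "category": "Editorial - Buying Guides", "traffic": 1500},
]
shares = traffic_share_by_category(rows)
for category, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{category}: {share:.0%}")
# Utility - Tools: 60%
# Product - Roof Racks: 25%
# Editorial - Buying Guides: 15%
```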

Comparing these taxonomies across multiple competitors also lets a brand map each rival’s “content strategy signature” and position itself relative to the market. One competitor might be a “product-led” entity with a site dominated by transactional category pages, while another might be an “authority-led” entity that relies on high-volume informational guides. By visualizing these differences in a side-by-side table, a strategist can see where the market is oversaturated and where it is underserved. Suppose every major player is fighting over the same set of transactional keywords, yet none has addressed the common technical questions customers ask before buying. In that case, a clear opportunity for an “editorial-led” capture strategy emerges. This systematic approach to classification grounds the resulting strategy in the actual mechanics of the search results rather than in a series of disconnected guesses.
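A side-by-side view only needs each site’s traffic share per category. This minimal sketch assumes a hypothetical `pages_by_site` dictionary of classified page rows for each competitor. Site names and figures are illustrative. A category a site does not cover shows as 0%, which is where underserved territory becomes visible.

```python
from collections import defaultdict


def share_matrix(pages_by_site):
    """Build {category: {site: share_of_that_site's_traffic}} from per-site page rows."""
    matrix = defaultdict(dict)
    for site, pages in pages_by_site.items():
        total = sum(p["traffic"] for p in pages) or 1  # avoid dividing by zero
        site_totals = defaultdict(float)
        for p in pages:
            site_totals[p["category"]] += p["traffic"]
        for category, t in site_totals.items():
            matrix[category][site] = t / total
    return matrix


def print_table(matrix, sites):
    """Print categories as rows and sites as columns; missing cells count as 0%."""
    print("category".ljust(28) + "".join(s.rjust(14) for s in sites))
    for category in sorted(matrix):
        cells = "".join(f"{matrix[category].get(s, 0.0):>14.0%}" for s in sites)
        print(category.ljust(28) + cells)


pages_by_site = {
    "competitor-a": [  # product-led
        {"category": "Product - Category Pages", "traffic": 8000},
        {"category": "Editorial - Buying Guides", "traffic": 2000},
    ],
    "competitor-b": [  # authority-led
        {"category": "Editorial - Buying Guides", "traffic": 7000},
        {"category": "Product - Category Pages", "traffic": 3000},
    ],
}
print_table(share_matrix(pages_by_site), ["competitor-a", "competitor-b"])
```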

The Human Override: Why Algorithmic Patterns Require Expert Validation

In practice, a meaningful share of AI-generated URL classifications requires manual correction from a human expert; roughly fifteen percent is a commonly cited figure. Language models are prone to mislabeling pages based on URL structure alone. For example, a page at “/blog/best-off-road-accessories/” might be tagged as a simple blog post when it is actually a highly optimized commercial comparison guide built for transactional intent. A strategist who accepts the machine’s initial classification without verification may overlook a critical piece of commercial content that drives high-value conversions for a rival. This “human override” is the essential quality control step that keeps a flawed dataset from poisoning the entire strategic plan and ensures the final recommendations reflect the true nature of the content.
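Keeping the human override explicit makes it auditable. AI labels stay as they were, reviewer corrections are layered on top, and the correction rate can be measured for each project rather than assumed. This is a minimal sketch, assuming labels are held in plain dictionaries keyed by URL path. The function name and sample labels are hypothetical.

```python
def apply_overrides(ai_labels, overrides):
    """Merge human corrections over AI-assigned labels.

    Returns the final {url: label} mapping and the fraction of AI-labelled URLs
    whose label a reviewer actually changed. The input dict is not modified."""
    final = dict(ai_labels)
    changed = 0
    for url, human_label in overrides.items():
        if url in ai_labels and ai_labels[url] != human_label:
            changed += 1
        final[url] = human_label  # the human label always wins
    rate = changed / len(ai_labels) if ai_labels else 0.0
    return final, rate


ai_labels = {
    "/blog/best-off-road-accessories/": "Editorial - Blog",
    "/roof-racks/crossbars/": "Product - Roof Racks",
    "/guides/roof-box-sizes/": "Editorial - Buying Guides",
    "/tools/towing-capacity-calculator/": "Utility - Tools",
}
# A reviewer who opened the page confirms it is a commercial comparison guide.
overrides = {"/blog/best-off-road-accessories/": "Commercial - Comparison Guide"}

final, rate = apply_overrides(ai_labels, overrides)
print(final["/blog/best-off-road-accessories/"], f"correction rate: {rate:.0%}")
# Commercial - Comparison Guide correction rate: 25%
```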

Beyond simple classification errors, artificial intelligence lacks visibility into the real-world environment of the search engine results pages. A machine sees a ranking position of “three” as a numerical value in a vacuum, but it does not see the shopping carousels, local map packs, and video results that may be absorbing eighty percent of the clicks on that specific page. An expert strategist knows that a position-one ranking for a high-volume term might be worthless if the page is buried under three layers of paid advertisements and interactive features. This level of contextual awareness is something that currently exists only in the human domain. Expert judgment is required to bridge the gap between “high-volume opportunities” on paper and “business-aligned priorities” in reality, filtering out keywords that are technically winnable but strategically useless.
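A position number hides the layout of the results page. One lightweight safeguard is to flag keywords whose recorded SERP features suggest heavy click competition and send them to manual review. This sketch assumes each keyword row carries a list of SERP feature names. Many keyword exports include such a column, but the field name `serp_features`, the feature labels, and the threshold here are hypothetical.

```python
# Hypothetical feature labels a strategist might treat as likely to absorb clicks.
CLICK_ABSORBING = {"shopping_ads", "top_ads", "local_pack", "video_carousel"}


def needs_serp_review(keyword_row, threshold=2):
    """Flag a keyword for manual SERP review when at least `threshold`
    click-absorbing features are recorded for its results page."""
    present = CLICK_ABSORBING.intersection(keyword_row["serp_features"])
    return len(present) >= threshold


keywords = [
    {"keyword": "roof rack", "volume": 40000,
     "serp_features": ["shopping_ads", "top_ads", "video_carousel"]},
    {"keyword": "roof rack weight limit", "volume": 2400,
     "serp_features": ["featured_snippet"]},
]
for row in keywords:
    print(row["keyword"], "-> review SERP" if needs_serp_review(row) else "-> no flag")
```

The flag decides nothing by itself. It marks where someone should inspect the live results page before treating a high-volume term as an opportunity.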

Expert validation also extends to the interpretation of keyword intent, which is frequently more complex than a simple “informational” or “transactional” tag. A keyword like “best overlanding gear” might sit on the fence between a shopper looking for inspiration and a buyer ready to purchase a specific kit. A human analyst can look at the current search results and see that Google is favoring long-form comparison videos over traditional product pages, which dictates a completely different content approach than a machine might suggest. By applying a layer of professional skepticism to every automated insight, the strategist ensures that the company’s resources are directed toward content that addresses the actual needs of the user at a specific point in their journey. This marriage of machine speed and human wisdom is what creates a truly resilient search strategy.

A Practical Framework for Executing High-Impact Gap Analysis and Content Briefs

Transforming a raw list of missing keywords into a functional growth strategy requires a tiered approach that prioritizes opportunities based on their relevance to the business rather than just their raw search volume. Instead of attempting to rank for every keyword a competitor holds, a practitioner should use AI to cluster these “gaps” into thematic tiers. Tier one should consist of core commercial drivers that align perfectly with the site’s primary product offerings; these are the “must-win” battles that directly impact the bottom line. Tier two might include adjacent commercial topics that support the broader market, while tier three is reserved for informational authority builders that earn links and build trust but may not convert immediately. This tiered system prevents the common mistake of chasing high-volume informational traffic at the expense of high-value transactional leads.
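The tiering rule fits in a few lines, which also makes the business logic easy to review. This is a sketch under assumed inputs. Each gap cluster is a dictionary with a hypothetical `intent` field (`"commercial"` or `"informational"`, assigned and checked beforehand) and a `core_product` flag set by someone who knows the product line.

```python
def assign_tier(cluster):
    """Tier 1: commercial and core to the product line.
    Tier 2: commercial but adjacent to it. Tier 3: informational authority builders."""
    if cluster["intent"] == "commercial":
        return 1 if cluster["core_product"] else 2
    return 3


def prioritize(clusters):
    """Order by tier first and by search volume only within a tier,
    so a high-volume informational topic cannot jump ahead of tier-one work."""
    return sorted(clusters, key=lambda c: (assign_tier(c), -c["volume"]))


gap_clusters = [
    {"name": "how to install a roof rack", "intent": "informational", "core_product": False, "volume": 18000},
    {"name": "pre-installed cargo systems", "intent": "commercial", "core_product": True, "volume": 900},
    {"name": "rooftop tent reviews", "intent": "commercial", "core_product": False, "volume": 4000},
]
for c in prioritize(gap_clusters):
    print(assign_tier(c), c["name"], c["volume"])
# 1 pre-installed cargo systems 900
# 2 rooftop tent reviews 4000
# 3 how to install a roof rack 18000
```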

Once the gaps are identified and tiered, the focus must shift to creating content briefs that go beyond simple keyword inclusion. Using the identified clusters, an analyst can prompt an AI assistant to draft a brief that outlines the H2 heading structure, target keywords, and content elements found on successful competitor pages. The most critical part of the brief, however, is the “differentiation angle,” and this section must be heavily shaped by human insight. To win in a crowded search market, a new page cannot simply be a “better” version of what already exists. It must offer a unique perspective, first-hand experience, or a data-backed insight that the competitor lacks. This helps the new content meet Google’s experience, expertise, authoritativeness, and trustworthiness (E-E-A-T) standards, which are increasingly difficult for purely AI-generated content to satisfy.
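One way to keep the differentiation angle from being left to the model is to make it a required field that a person must fill in before the brief can be rendered. This is a minimal sketch with a hypothetical `ContentBrief` structure. The field names and example values are illustrative, not a standard brief format.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    target_keyword: str
    secondary_keywords: list
    h2_outline: list
    competitor_elements: list = field(default_factory=list)
    differentiation_angle: str = ""  # written by a human, not by the AI draft

    def render(self) -> str:
        """Render the brief as text, refusing to do so without a differentiation angle."""
        if not self.differentiation_angle.strip():
            raise ValueError("Brief is incomplete: add a human-written differentiation angle.")
        lines = [
            f"Target keyword: {self.target_keyword}",
            "Secondary keywords: " + ", ".join(self.secondary_keywords),
            "H2 outline:",
        ]
        lines += [f"  - {heading}" for heading in self.h2_outline]
        if self.competitor_elements:
            lines.append("Seen on ranking competitor pages: " + ", ".join(self.competitor_elements))
        lines.append(f"Differentiation angle: {self.differentiation_angle}")
        return "\n".join(lines)


brief = ContentBrief(
    target_keyword="best roof box for SUVs",
    secondary_keywords=["roof box capacity", "roof box fitting"],
    h2_outline=["How to choose a roof box size", "Fitting a roof box to factory rails"],
    competitor_elements=["comparison table", "capacity chart"],
    # Hypothetical angle; in practice this comes from the team's own experience or data.
    differentiation_angle="Measured wind noise for each box on our own test vehicle.",
)
print(brief.render())
```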

The final stage of this framework is a rigorous cannibalization check to ensure that new content initiatives do not undermine existing site authority. By cross-referencing the proposed keyword targets against the site’s current ranking pages, a strategist can see whether an existing page is already “fighting” for the same terms. In many cases, the best course of action is not to create a new page but to optimize and expand an existing one. This architectural foresight prevents a “content graveyard” in which multiple pages on the same site compete for the search engine’s attention, ultimately diluting the ranking power of each. By following this structured, validated, and tiered process, a brand can turn the raw power of AI-assisted analysis into a sophisticated engine for sustainable organic growth, ensuring that every new page published has a clear purpose and a distinct competitive edge.
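At its core, the cannibalization check is a join between proposed targets and the site’s current rankings. This sketch makes several simplifying assumptions. Current rankings are rows with `keyword`, `url`, and `position` fields. Keywords are matched exactly (case-insensitive), although real overlap also comes from close variants. Anything in the top 20 counts as “already fighting.” The cut-off and the action wording are hypothetical.

```python
from collections import defaultdict


def cannibalization_report(proposed_keywords, current_rankings, max_position=20):
    """Map each proposed keyword to a suggested action based on which existing
    site URLs already rank for it at or above `max_position`."""
    ranking_urls = defaultdict(list)
    for row in current_rankings:
        if row["position"] <= max_position:
            ranking_urls[row["keyword"].lower()].append(row["url"])
    report = {}
    for keyword in proposed_keywords:
        urls = ranking_urls.get(keyword.lower(), [])
        if not urls:
            action = "create new page"
        elif len(urls) == 1:
            action = f"expand existing page {urls[0]}"
        else:
            action = "consolidate competing pages: " + ", ".join(urls)
        report[keyword] = action
    return report


current = [
    {"keyword": "roof box sizes", "url": "/guides/roof-box-sizes/", "position": 8},
    {"keyword": "roof rack weight limit", "url": "/blog/weight-limits/", "position": 14},
    {"keyword": "roof rack weight limit", "url": "/guides/load-ratings/", "position": 17},
]
proposed = ["Roof box sizes", "roof rack weight limit", "rooftop tent reviews"]
for keyword, action in cannibalization_report(proposed, current).items():
    print(f"{keyword}: {action}")
```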

The integration of advanced language models into the competitive analysis workflow has fundamentally altered the economics of search engine optimization, making high-level insights accessible at unprecedented speeds. However, as the technical barriers to data processing have fallen, the importance of human strategic oversight has only increased. The practitioners who will find the most success in the coming years are not those who use AI to replace their thinking, but those who use it to accelerate their ability to find and act upon meaningful market patterns. By maintaining a healthy skepticism toward automated outputs and prioritizing business relevance over vanity metrics, an organization can navigate the complexities of the modern search landscape with precision. Ultimately, the goal is to build a digital presence that is not only visible to search engines but also indispensable to the users who rely on them for guidance, value, and solutions.

The transition toward an AI-augmented workflow requires a complete re-evaluation of how data is traditionally perceived within the marketing department. Analysts move away from the tedious task of manual sorting and instead act as strategic architects who curate the inputs and validate the outputs of their digital assistants. This shift allows a more dynamic response to competitor movements, because the time saved on data entry is redirected toward creative problem-solving and deeper market research. The resulting strategies are more agile, enabling brands to adjust their content focus as new search trends emerge. The emphasis on human-led differentiation keeps the content engaging and distinctive, even in a landscape increasingly crowded by automated noise.

In the final assessment, the most effective competitive analyses treat artificial intelligence as a sophisticated intern rather than an infallible oracle. Every generated table and every suggested cluster goes through a rigorous review that checks for alignment with the brand’s core identity and commercial goals. This disciplined approach avoids the “plausibility trap” and grounds every recommendation in the reality of the search engine results pages. By the time the final reports reach decision-makers, they are no longer just collections of data; they are tested roadmaps for winning market share. The practitioners who master this balance between technology and intuition set the standard for the field, showing that the future of search depends less on the tools themselves than on the judgment with which they are applied.