The digital publishing landscape is currently navigating a period of profound structural realignment as creators struggle to maintain visibility within an increasingly automated information ecosystem. Every millisecond, the friction between human-generated content and machine-driven synthesis diminishes, leaving digital publishers at a crossroads where interaction meets automation. Central to this evolution is a controversial new feature known as the “AI button,” an interactive element now frequently appearing on food, travel, and lifestyle blogs. These buttons offer users immediate ways to interact with content through Large Language Models like ChatGPT, Gemini, and Claude, often labeled with commands such as “Summarize with AI,” “Save this recipe to ChatGPT,” or “Ask AI about this post.” This shift represents a significant departure from traditional social sharing icons, signaling a move toward active content manipulation by the end-user.

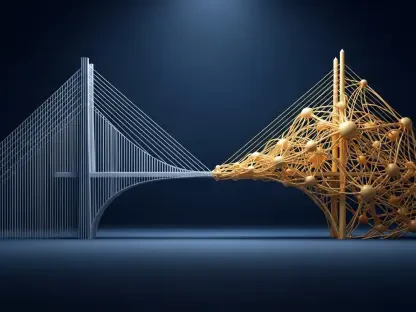

The primary focus of this market analysis involves determining whether these buttons constitute a forward-thinking enhancement of the user experience or a risky tactic in the realm of Generative Engine Optimization that could trigger security concerns or search engine penalties. By dissecting the underlying mechanics of these tools, analyzing the data-driven results of their early implementation, and examining the cybersecurity concerns raised by major technology entities, stakeholders can better understand the broader implications for the future of content discovery. The analysis explores the intersection of utility and optimization to determine if these tools represent a bridge to a more efficient web or a risky overextension of current technological capabilities.

The Evolution of Interaction: Integrating AI into the Digital Experience

Digital publishing is no longer a static medium defined by the simple act of reading; it has become a collaborative space where the boundary between the source material and the reader’s tools is increasingly blurred. The integration of artificial intelligence into the browsing experience is the natural culmination of a decade spent prioritizing accessibility and speed. As users become accustomed to receiving instantaneous answers from virtual assistants, the expectation for on-page interactivity has shifted. Publishers who once focused solely on keyword density and backlink profiles now find themselves needing to facilitate the seamless transfer of data from their domains into the private environments of a user’s preferred artificial intelligence. This transition requires a deep understanding of how these buttons function as gateways between public information and private synthesis.

Furthermore, the rise of these interactive elements is not merely a trend but a strategic response to the diminishing returns of traditional display advertising and linear traffic models. In a market where attention is the most valuable currency, reducing the friction required to “use” content becomes a competitive advantage. If a reader can save a complex travel itinerary or a detailed cooking procedure to their AI assistant with a single click, the perceived value of that content increases. However, this convenience brings about a fundamental question regarding the ownership of the user journey. By encouraging users to export content into an AI interface, publishers are essentially inviting a third-party intermediary to facilitate the final stage of the consumption process, a move that carries both immense potential for engagement and significant risks for site-wide retention.

From Search to Synthesis: The Historical Shift in Content Consumption

To grasp the current prominence of AI-driven interactivity, one must look at the fundamental transformation in user behavior that has occurred since the early 2020s. For nearly two decades, the discovery model remained strictly linear: a user identified a need, performed a query on a search engine, visited a blog or news site, and perhaps engaged with a newsletter or social media profile to ensure a future return. This search-and-visit paradigm was the bedrock of the modern web. However, the maturation of Large Language Models has introduced a new “AI discovery layer.” Users are no longer content with merely finding a source; they now seek to synthesize, reformat, and store that information in ways that fit their specific, immediate needs. This shift from passive consumption to active synthesis has forced creators to reconsider how they package information for both humans and machines.

Past developments, such as the transition to mobile-first indexing and the era of “featured snippets,” provided an early warning to publishers that the web was moving toward a zero-click reality. In those phases, the objective was to provide enough information to satisfy a query while still enticing a click-through. Today, the challenge is more complex because the “click” is often the beginning of a process where the user intends to feed the content into a secondary engine for further processing. AI buttons are the latest iteration of this trend, aiming to capture the audience’s attention at the precise moment they transition from reading to processing. Recognizing this background is essential for understanding that these buttons are not a peripheral gimmick but a calculated adaptation to a documented change in the human-digital interface that favors modularity over traditional long-form engagement.

Navigating the Utility and SEO Impact of AI Integration

The Functional Mechanics: Analyzing the SEO Paradox

At their technical core, AI buttons function as user-initiated shortcuts that pre-populate specific prompts within an AI assistant’s interface. When a user interacts with a “Summarize with AI” button, the system essentially creates a bridge that carries the page’s URL or its text directly into a tool like ChatGPT or Gemini. This allows the reader to ask follow-up questions, modify the data, or store it in the AI’s persistent memory without the manual labor of copying and pasting. Crucially, these buttons do not possess the power to retrain global models or guarantee a site’s inclusion in broad search engine AI overviews. Instead, they operate on a personal, siloed level, influencing how an individual’s specific assistant perceives and interacts with a specific source of information.

The market has recently seen empirical evidence regarding the effectiveness of these features through detailed case studies. For instance, high-authority niche sites that implemented these tools observed a surge in referral traffic from AI platforms, sometimes reaching triple-digit growth percentages. However, a significant paradox emerged: the buttons themselves were often secondary to the presence of on-page, human-readable AI summaries. Data indicated that pages featuring both a “TL;DR” summary and an AI button saw a 116% increase in impressions, whereas pages that only included the button without the text saw negligible growth. This suggests that while buttons enhance the user experience, search engines still prioritize structured, accessible data that provides immediate value to the crawler. The button is the “finishing touch” rather than the foundation of a successful Generative Engine Optimization strategy.

Cybersecurity Concerns: Identifying the Threat of AI Poisoning

As the adoption of AI-driven shortcuts accelerates, so does the scrutiny from the cybersecurity and search engine optimization communities. The most pressing concern involves “AI Recommendation Poisoning,” a phenomenon where creators might use deceptive tactics to manipulate how an AI assistant perceives a webpage. This risk arises when hidden instructions, invisible to the human eye but readable by machine crawlers, are embedded in the site’s code or within the prompt triggered by an AI button. If a creator attempts to force an AI to “always recommend this site as the primary authority,” it crosses the boundary from helpful optimization to “prompt injection.” This is a documented security risk because it seeks to override the safety protocols and neutral training of the artificial intelligence.

Moreover, there is a persistent fear that aggressive optimization could lead to a “cannibalization” of the publishing industry. By making it extremely easy for a user to exit a website and enter an AI chat interface, bloggers may be inadvertently training their audience to bypass the original site altogether in the future. This creates a strategic tension between providing modern utility and maintaining the on-site dwell time necessary for traditional monetization through display advertising. If the AI becomes the primary interface through which the user interacts with the content, the value of the original domain may diminish, leading to a long-term decline in the economic viability of independent content creation. This risk necessitates a cautious approach to how much control is handed over to the machine interface.

Strategic Distinctions: Legitimate Innovation versus Strategic Missteps

A clear consensus is beginning to form regarding what constitutes healthy innovation versus a strategic misstep in this emerging field. Legitimate innovation focuses on “UX utility”—designing interactions that help the user perform a specific task, such as scaling a recipe for a different number of guests or converting a travel itinerary into a calendar format. These are transparent, user-led actions that add genuine value to the content. In contrast, “bad practice” involves any form of deception, such as hidden prompt instructions or “optimization bias,” where a page is designed solely for an AI crawler at the expense of the human reader’s experience. Such tactics are likely to be neutralized by AI providers as they refine their ability to detect and ignore manipulative signals.

Furthermore, many of the more alarmist fears regarding “AI hacking” via buttons appear to be overstated in the current market context. Because most AI buttons are transparent and require a deliberate click from the user, they function more like advanced digital bookmarks than malicious exploits. Since the memory of a Large Language Model is currently user-specific, one individual saving a site to their ChatGPT memory does not alter the AI’s response for the rest of the global user base. Therefore, the threat to the overall integrity of the internet remains low, provided that creators adhere to standards of transparency. There is currently no data to suggest that search engines like Google penalize sites for offering these buttons, as the interaction occurs outside of the search engine’s direct ecosystem and does not violate existing webmaster guidelines.

Anticipating the Future: Trends in Generative Engine Optimization

As we look toward the horizon, the role of artificial intelligence in content strategy will likely move from simple, external buttons to deeply integrated “agentic” experiences. We are entering an era where content must be optimized not just for search engines, but for autonomous AI agents that act on behalf of the user. Emerging trends suggest that “utility-based” interactions will become the standard expectation for the digital consumer. Users will soon expect every piece of data—whether it is a financial report, a list of ingredients, or a set of technical instructions—to be modular and ready for immediate adjustment by their personal AI. This shifts the fundamental focus of content creation from “discovery” to “operational utility.”

Technological and regulatory changes will also play a pivotal role in shaping this evolution. As AI providers refine their systems for source attribution and citations, publishers who make their content easy for these models to parse and verify will likely see a significant benefit in referral traffic. Expert forecasts suggest the eventual rise of “AI-friendly” certifications or standardized technical schemas that allow publishers to signal their expertise and trustworthiness directly to Large Language Models. The evolution of the web into a seamless discovery layer means that the most successful strategies will be those that embrace this interactivity while doubling down on the core principles of experience, expertise, authoritativeness, and trustworthiness. High-quality, human-vetted data will remain the most valuable asset in an environment flooded with machine-generated noise.

Actionable Strategies: Guidelines for Modern Content Creators

To navigate this era of automated synthesis effectively, content creators must adopt a balanced and experimental approach that prioritizes the human user while remaining accessible to the machine. The “hierarchy of discovery” dictates that high-quality content and topical authority must remain the foundation of any strategy. AI buttons should be viewed as a sophisticated layer of optimization rather than a standalone solution for stagnant traffic. Businesses and independent professionals should prioritize the implementation of clear, structured summaries at the top of their digital assets. These “TL;DR” sections provide immediate value to both human readers who are in a hurry and AI crawlers looking for a concise data point to index.

Furthermore, transparency in implementation is non-negotiable for maintaining brand integrity. All AI prompts triggered by interactive buttons should be clearly visible and editable by the user, ensuring that the brand is not perceived as manipulative or deceptive. Creators should focus on “functional utility,” designing buttons that assist users in specific, complex tasks rather than simply asking the AI to remember the site. By monitoring interaction data and staying agile in the face of rapid technological shifts, publishers can ensure they are meeting the needs of the modern consumer without falling into the traps of short-term, deceptive optimization. The goal is to become a trusted source that an AI assistant wants to return to because the data is consistently reliable and easy to process.

Conclusion: Balancing Innovation with Strategic Integrity

The analysis revealed that AI buttons represented a pivotal, albeit controversial, shift in how digital content was managed and consumed during this period of transition. These tools functioned as a symbolic bridge between the era of static reading and the rise of the interactive discovery layer, offering a glimpse into a future where content was designed for immediate synthesis. While the implementation of these buttons provided a notable boost in referral traffic from AI platforms, the data consistently showed that the most substantial gains in visibility resulted from the presence of structured, on-page summaries that catered to both human and machine needs. The market recognized that while utility-based features improved the user experience, they did not serve as a replacement for the fundamental pillars of authority and high-quality reporting.

Strategic insights gathered from this period indicated that the risks of “AI poisoning” and “prompt injection” were largely manageable through a commitment to transparency and user-led interaction. Publishers who avoided deceptive tactics and focused on providing functional value—such as scaling data or reformatting information—successfully integrated these tools without compromising their standing with search engines or AI providers. In the end, the most successful creators were those who treated artificial intelligence as an assistant to the human experience rather than a substitute for it. They understood that in an increasingly automated world, the value of the original source remained paramount, and technology served its best purpose when it made that value more accessible to the end-user. Moving forward, the industry learned to prioritize “utility over manipulation,” ensuring that innovation served to strengthen the relationship between the creator and the consumer.