The subtle click of a mouse in a quiet office now carries more weight than ever before, as a single exclusion in a search campaign can instantly recalibrate millions of dollars in algorithmic spending. In the current digital marketing landscape, negative keyword management has moved far beyond the tedious cleanup tasks that once defined the industry. Today, every exclusion serves as a sophisticated strategic communication to platform bidding engines, dictating exactly how machine learning should interpret search intent. As automation takes a more dominant role in ad delivery, negative keywords represent one of the few remaining levers advertisers can pull to protect profit margins and define their ideal audience. In this high-stakes environment, the way an account manager handles exclusions directly determines whether the platform’s AI works for or against the business objectives.

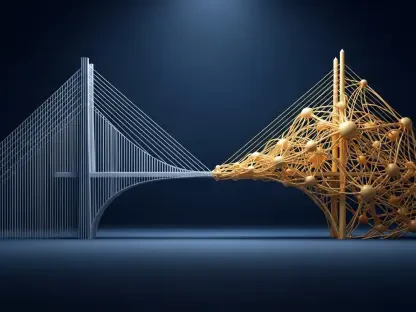

This evolution is not merely a technical update but a fundamental shift in how humans and machines collaborate. Advertisers are no longer just “filtering” traffic; they are shaping the very signals that guide neural networks. When an exclusion is added, it informs the system that a specific cluster of data points does not represent a valuable customer. This prevents the algorithm from chasing irrelevant “ghost” conversions that might look good on a dashboard but fail to impact the bottom line. Understanding this dynamic is the difference between a campaign that burns through capital and one that scales with surgical precision.

From Administrative Cleanup to Algorithmic Signal-Shaping

The modern objective of negative keyword strategy is “sculpting,” a meticulous process of ensuring a perfect alignment between a user’s search query, the ad creative, and the landing page experience. When this trifecta is synchronized, it produces high click-through rates and superior Quality Scores, which in turn lower the Cost Per Click and improve overall campaign health. This harmony is the bedrock of profitability. Modern practitioners must view negative keywords as a primary tool for maintaining this balance, replacing the static mentalities of previous years with a dynamic, decision-based strategy that evolves alongside the market.

Failing to maintain this alignment leads to wasted expenditure and sends poor signals to the algorithm, teaching it to chase irrelevant traffic under the guise of broad matching. If the system is not told what to avoid, it will naturally explore wider and wider circles of intent to satisfy spending requirements. Consequently, sculpting is no longer an optional luxury for high-end agencies; it is the new standard for anyone serious about maintaining a healthy account. By proactively removing the noise, advertisers allow the “signal” of high-intent buyers to stand out, making it easier for the AI to find similar users.

Why Keyword Sculpting Is the New Standard for Campaign Health

Strategic decision-making in 2026 begins with calibrating how aggressively an advertiser should exclude traffic. This is not a one-size-fits-all setting but a stance dictated by specific business goals. Accounts with limited budgets call for an aggressive stance: practitioners scan search term reports weekly and remove any query that deviates even slightly from the target intent. This protective posture directs every cent toward high-probability conversions. In contrast, accounts that are scaling or entering new markets need a permissive stance that gives the algorithm the “exploration” data it needs to find new conversion pockets.

Between these extremes lies the strategic middle ground where most enterprise accounts operate. Here, negatives are added only when there is statistically significant proof of irrelevance. This balanced approach avoids the trap of “over-negating,” which can inadvertently choke off valuable traffic. By aligning the aggressiveness of exclusions with the broader financial goals of the organization, managers ensure that their technical tactics are supporting, rather than hindering, their strategic ambitions. Moreover, this calibration must be revisited quarterly as market conditions and competitive landscapes shift.
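One way to make “statistically significant proof of irrelevance” concrete for a query with zero conversions is to ask how likely that result would be if the query actually converted at the account’s baseline rate. The sketch below is illustrative only: the function names, the 5% significance level, and the assumption that clicks are independent are not taken from any platform.

```python
def prob_zero_conversions(clicks: int, baseline_cvr: float) -> float:
    """Chance of seeing no conversions in `clicks` clicks if the query
    really converted at `baseline_cvr` (clicks treated as independent)."""
    if clicks < 0 or not 0.0 <= baseline_cvr <= 1.0:
        raise ValueError("clicks must be >= 0 and baseline_cvr within [0, 1]")
    return (1.0 - baseline_cvr) ** clicks


def has_enough_evidence(clicks: int, baseline_cvr: float, alpha: float = 0.05) -> bool:
    """True when zero conversions would be surprising at significance level alpha.

    alpha = 0.05 is an assumed default, not a platform requirement.
    """
    return prob_zero_conversions(clicks, baseline_cvr) < alpha
```

With an assumed 4% baseline conversion rate, 40 clicks without a conversion would still happen by chance roughly 20% of the time, so the query stays. At 80 clicks the chance drops to about 4%, which clears the 5% bar and justifies the exclusion.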

The Intentional Use of Match Types: A Tactical Choice

Negative match types do not mirror the behavior of positive keywords, which makes their selection a critical tactical choice for the seasoned professional. A positive keyword can expand to related searches, whereas a negative keyword generally blocks only queries that contain its own terms. Negative exact match has become the tool for surgical removal, allowing managers to block specific high-cost, long-tail queries without risking the loss of valuable variations. It is the preferred method for excluding specific competitor names or highly specific product names that do not fit the current offering. This precision prevents the “collateral damage” that often comes with broader exclusion methods.

Negative phrase match, by contrast, is best suited for blocking “intent modifiers” that signal non-buying intent across many queries. Words like “tutorial,” “free,” or “how-to” are frequently attached to high-volume searches but rarely lead to a direct sale for premium service providers. By applying these as phrase matches, an advertiser can clean up thousands of potential queries with a single entry. Meanwhile, negative broad match remains the “nuclear option” for words fundamentally incompatible with the brand, such as “jobs” or “reviews.” Wherever these terms appear in a search string, the ad stays hidden, preserving the brand’s reputation and budget.
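The three negative match types can be sketched as simple word-level rules. This is a simplified model, not a platform implementation: it assumes exact match blocks only the identical query, phrase match blocks queries containing the terms in the same order, and broad match blocks queries containing all the terms in any order. It ignores misspellings, plurals, and any platform-specific normalization, and `is_blocked` is a hypothetical helper name.

```python
def is_blocked(query: str, negative: str, match_type: str) -> bool:
    """Return True if `negative` would block `query` under the simplified rules."""
    q = query.lower().split()
    n = negative.lower().split()
    if not n:
        return False
    if match_type == "exact":
        # Same words, same order, nothing extra.
        return q == n
    if match_type == "phrase":
        # The negative's words appear as one contiguous, ordered run.
        return any(q[i:i + len(n)] == n for i in range(len(q) - len(n) + 1))
    if match_type == "broad":
        # Every word of the negative appears somewhere, in any order.
        return all(word in q for word in n)
    raise ValueError(f"unknown match type: {match_type}")
```

Under these rules, a phrase negative “free” blocks “free crm software” but not “freelance crm,” while an exact negative “acme crm” leaves “acme crm pricing” untouched. For a single-word negative, phrase and broad behave identically; the difference only appears with multi-word entries, where broad ignores word order.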

Defining the Performance-Based Trigger for Exclusion

Consistency in when to add a negative keyword is as important as what is added, ensuring every exclusion has a performance-based justification. Modern account management relies on data-driven thresholds, such as setting a Cost-Per-Acquisition (CPA) ceiling. A query might be flagged for exclusion if it spends three times the target amount without producing a conversion. This removes the emotional guesswork and prevents “gut feelings” from dictating account structure. It also creates a transparent audit trail, allowing stakeholders to understand exactly why certain traffic was deemed unworthy of investment.
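The CPA-ceiling trigger described above can be expressed as a small filter over search term data. This is a minimal sketch: the `QueryStats` record and `flag_for_exclusion` function are hypothetical names, the rows would come from whatever search term export the account uses, and the 3x multiplier is the example threshold from the text rather than a universal rule.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryStats:
    query: str
    cost: float         # total spend on this query in the review window
    conversions: float  # conversions attributed to this query


def flag_for_exclusion(rows, target_cpa: float, multiplier: float = 3.0):
    """Return queries that spent at least multiplier x target CPA with no conversions."""
    if target_cpa <= 0:
        raise ValueError("target_cpa must be positive")
    threshold = target_cpa * multiplier
    return [r.query for r in rows if r.conversions == 0 and r.cost >= threshold]
```

With a target CPA of 50, a query that has spent 160 without converting is flagged, while one that has spent 120, or one that has spent 400 and converted twice, is left alone. Each flag carries its own justification, which is what makes the audit trail transparent.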

There is, however, a lingering danger in overly proactive blocking. Adding negatives simply to appear “active” in the account can be counterproductive, potentially blocking the next big growth opportunity before the data has a chance to mature. The window of data used to make these decisions, whether 30, 90, or 365 days, significantly affects the account’s trajectory and its responsiveness to market shifts. A 90-day window is often treated as the gold standard: it provides enough data to smooth out weekly fluctuations while remaining responsive enough to protect the budget from seasonal downturns or sudden shifts in consumer behavior.
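To see how the lookback window changes the verdict on a query, the sketch below sums cost and conversions over a window ending on a chosen date. The function name and the `(day, cost, conversions)` row layout are assumptions made for illustration, not a platform export format.

```python
from datetime import date, timedelta


def totals_in_window(daily_rows, as_of: date, window_days: int):
    """Sum (cost, conversions) over the `window_days` days ending on `as_of`, inclusive.

    `daily_rows` is an iterable of (day, cost, conversions) tuples for one query.
    """
    if window_days < 1:
        raise ValueError("window_days must be at least 1")
    start = as_of - timedelta(days=window_days - 1)
    cost = 0.0
    conversions = 0.0
    for day, day_cost, day_conversions in daily_rows:
        if start <= day <= as_of:
            cost += day_cost
            conversions += day_conversions
    return cost, conversions
```

Take a query that spent 90 and converted once on 10 January 2026, then spent 160 with no conversion on 20 March 2026. Evaluated on 31 March with a 30-day window, it shows 160 in spend and zero conversions and looks like a candidate for exclusion. With a 90-day window the January conversion is included, and the same query shows 250 in spend against one conversion.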

Balancing Campaign Sculpting with Algorithmic Trust

This final strategic pillar addresses the tension between human control and machine learning’s access to user context. High sculpting involves using extensive lists to force the algorithm to keep brand, non-brand, and competitor traffic strictly separated for maximum transparency. This is essential for companies that need to report on specific department performance or those with highly segmented product lines. In contrast, low sculpting involves trusting the platform’s access to user history and session data to decide which query matches which keyword, often leading to surprising but profitable discoveries.

The trend in 2026 is moving toward a synthesis of these two schools of thought. While AI is undeniably faster at processing data, its goals are often aligned with platform-defined conversions rather than actual business outcomes. By keeping a deliberate layer of sculpting in place, human managers ensure the algorithm stays within the bounds of profitable intent. This hybrid approach uses AI to surface potential exclusions based on performance data, while the human expert makes the final decision. It maintains the speed modern commerce demands without sacrificing the strategic oversight that only a person with a deep understanding of the brand can provide.

Expert Perspectives on the Risks and Rewards of Constraint

Industry leaders have largely converged on how to manage the intersection of human strategy and machine learning. They warn that over-constraint can be a silent killer of performance: Smart Bidding needs a certain amount of “room to breathe” to find high-value conversions that might look imperfect on paper but represent a searcher’s ultimate need. Experts emphasize that “intent is messy,” and a query that does not literally match a product description may still lead to a loyal customer. This realization has shifted the focus from literal word-matching to broad intent-blocking.

To move forward, account managers are transitioning from reactive checklists to a system of performance triggers and AI-assisted auditing. They are establishing frameworks that treat every negative keyword as a training signal, teaching the AI what “good” traffic looks like for their specific business models. By prioritizing intent over keywords and applying a final brand filter, these practitioners ensure that their exclusions define the boundaries of digital growth without stifling opportunity. The focus is shifting toward predictive management tools that preview the impact of a negative keyword before it is applied, marking the end of the “guess and check” era of digital advertising.