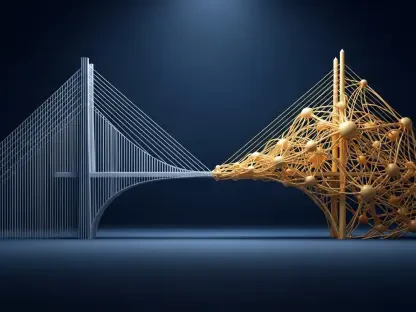

The thin line between a highly productive enterprise chatbot and a brand-destroying digital liability often comes down to a few milliseconds of automated decision-making. As generative models become deeply integrated into corporate workflows, the unpredictability of large language models has transformed from a technical quirk into a significant operational risk. Moonbounce, a startup led by industry veterans from the social media moderation space, has emerged to address this specific vulnerability. By introducing a sophisticated control layer between the model and the user, the platform attempts to civilize the often chaotic nature of probabilistic AI. This infrastructure represents a departure from basic filtering, aiming instead for a comprehensive governance system that scales alongside increasing machine intelligence.

The Shift: From Experimental AI to Enterprise Governance

The early days of generative AI were defined by a fascination with capability, but the current landscape demands a focus on reliability and compliance. Companies are no longer satisfied with “playground” environments; they require systems that can withstand the scrutiny of legal departments and regulatory bodies. This evolution has birthed a new category of technology designed to move past the generic safety filters provided by model creators like OpenAI or Google. These built-in guardrails are often too broad, failing to account for the specific ethical nuances or proprietary data constraints of a private enterprise.

In this shifting landscape, the focus has moved toward external governance engines that treat AI safety as a specialized infrastructure layer. This approach recognizes that the intelligence of a model is separate from the rules governing its behavior. By decoupling the “brain” from the “policeman,” organizations can update their corporate standards without having to retrain or fine-tune their entire underlying model. This modularity is essential for staying agile in a market where social expectations and legal requirements change monthly rather than annually.

Technical Components of AI Control Engines

Policy-as-Code Translation

At the heart of the modern moderation stack is the concept of policy-as-code. Traditionally, corporate guidelines exist in dense PDF documents that human moderators interpret with varying degrees of consistency. Moonbounce and its contemporaries aim to replace this subjective process with a machine-readable translation layer. This technology takes high-level directives, such as “do not provide medical diagnoses” or “remain neutral on political candidates,” and converts them into explicit, machine-checkable rules. The AI’s output is then checked against a fixed logical framework rather than the model’s own judgment, which reduces the “drift” that often occurs when models are asked to self-monitor.
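To make the idea concrete, here is a minimal sketch of what a policy-as-code rule set could look like. It is not Moonbounce's implementation, which the article does not describe. The `PolicyRule` type, the rule IDs, and the regular expressions are all hypothetical, and a production system would likely rely on classifiers rather than simple patterns.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRule:
    """One written guideline paired with a machine-checkable form (hypothetical)."""
    rule_id: str
    directive: str        # the human-readable guideline
    pattern: re.Pattern   # illustrative stand-in for a real detector


# Toy rules for the two directives quoted above; the patterns are assumptions.
RULES = [
    PolicyRule(
        rule_id="no-medical-diagnosis",
        directive="Do not provide medical diagnoses",
        pattern=re.compile(r"\byou (?:have|are suffering from)\b", re.IGNORECASE),
    ),
    PolicyRule(
        rule_id="political-neutrality",
        directive="Remain neutral on political candidates",
        pattern=re.compile(r"\b(?:vote for|vote against)\b", re.IGNORECASE),
    ),
]


def violated_rules(text: str, rules=RULES) -> list[str]:
    """Return the IDs of every rule whose pattern matches the text."""
    return [rule.rule_id for rule in rules if rule.pattern.search(text)]
```

Because the rules live in data rather than in the model, updating a guideline means editing `RULES`, not retraining anything.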

Real-Time Intercept and Monitoring

The most critical technical hurdle in this field is keeping latency low enough for real-time intervention. A safety layer is useless if it slows the user experience to a crawl. Advanced moderation infrastructure utilizes high-speed interceptors that scan both incoming prompts and outgoing tokens as they are generated. By using lightweight, specialized models to “vibe check” the primary LLM’s output in milliseconds, these systems can kill a response mid-sentence if it begins to veer into restricted territory. This active enforcement is a significant step up from passive auditing, which only identifies violations after they have already reached the end user.
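The mid-sentence cutoff can be sketched as a wrapper around a token stream. This is an illustrative outline under stated assumptions, not a description of any vendor's interceptor: `guarded_stream`, `toy_check`, and the `REFUSAL` text are hypothetical names, and the keyword check stands in for the lightweight model a real system would call.

```python
from typing import Callable, Iterable, Iterator

REFUSAL = " [response stopped by policy]"  # hypothetical placeholder message


def guarded_stream(
    tokens: Iterable[str], is_allowed: Callable[[str], bool]
) -> Iterator[str]:
    """Yield tokens until the accumulated text fails the check, then stop."""
    emitted = ""
    for token in tokens:
        candidate = emitted + token
        # Check before releasing the token, so a violating token is never shown.
        if not is_allowed(candidate):
            yield REFUSAL
            return
        emitted = candidate
        yield token


def toy_check(text: str) -> bool:
    """Stand-in for a fast safety model: block one restricted phrase."""
    return "guaranteed returns" not in text.lower()
```

Checking the accumulated text rather than each token in isolation lets the wrapper catch a restricted phrase that spans several tokens. The cost is that every token waits on the check, which is why the speed of the checker matters so much.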

Emerging Trends in AI Safety and Regulation

The trajectory of this technology is being heavily influenced by a global wave of legislative action, most notably the EU AI Act and tightening standards in North America. We are seeing a trend where the burden of proof regarding AI safety is shifting from the technology provider to the technology deployer. Consequently, infrastructure that provides a “paper trail” of safety decisions is becoming a requirement for insurance eligibility. The market is moving toward a standard where every interaction must be logged, categorized, and verified against a specific compliance framework to mitigate liability.
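One way to picture such a “paper trail” is an append-only log in which every safety decision is recorded, categorized, and tagged with the framework it was checked against. The sketch below is an assumption-laden illustration, not a mandated format: the field names are hypothetical, and the hash chaining is an added technique (not mentioned in the article) for making after-the-fact edits detectable.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

GENESIS = "0" * 64  # hypothetical starting value for the first record's prev_hash


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str
    interaction_id: str
    decision: str      # "allow" or "block"
    category: str      # e.g. "financial-advice"
    framework: str     # compliance framework the decision was checked against
    prev_hash: str
    record_hash: str = ""


def _digest(fields: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record fields."""
    payload = json.dumps(fields, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log, *, timestamp, interaction_id, decision, category, framework):
    """Append a record whose hash covers its fields and the previous hash."""
    fields = {
        "timestamp": timestamp,
        "interaction_id": interaction_id,
        "decision": decision,
        "category": category,
        "framework": framework,
        "prev_hash": log[-1].record_hash if log else GENESIS,
    }
    record = AuditRecord(**fields, record_hash=_digest(fields))
    log.append(record)
    return record


def verify_chain(log) -> bool:
    """Recompute every hash; any edited or reordered record breaks the chain."""
    prev = GENESIS
    for record in log:
        fields = asdict(record)
        stored = fields.pop("record_hash")
        if record.prev_hash != prev or _digest(fields) != stored:
            return False
        prev = stored
    return True
```

An auditor can rerun `verify_chain` over an exported log to confirm that no decision was altered after it was written.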

Real-World Applications Across Industries

In the financial sector, where a single incorrect investment tip can lead to massive fines, AI moderation infrastructure acts as a vital compliance safeguard. Banks use these control layers to ensure that their customer-facing bots never cross the line into unauthorized financial advice. Similarly, in healthcare, these systems act as digital chaperones, ensuring that patient interactions stay within the bounds of informational support rather than clinical diagnosis. These use cases demonstrate that the technology is not just about blocking “bad words” but about enforcing professional boundaries in high-stakes environments.

Challenges to Widespread Adoption and Performance

Despite the rapid progress, significant hurdles remain, particularly regarding the phenomenon of “jailbreaking.” Sophisticated users constantly find ways to bypass safety filters using complex prompt engineering or linguistic traps. Moderation engines must engage in a constant cat-and-mouse game, updating their detection logic to recognize these adversarial patterns. There is also a trade-off between safety and usefulness: as checks become more rigorous, they risk stifling the creativity and utility that make generative AI valuable in the first place. Striking the balance between a “safe” bot and a “useful” one remains a primary engineering challenge.

The Future Outlook of Moderation Infrastructure

Looking ahead, the next frontier for moderation will be multimodal enforcement. As AI begins to generate video, audio, and complex code in real time, the control layers must evolve to understand more than just text. We should anticipate a move toward “semantic firewalls” that can interpret intent across different media types simultaneously. This will likely lead to a more decentralized safety ecosystem, where specialized safety agents from different providers work in tandem to govern a single AI interaction, creating a more robust and objective safety net.

Assessment of the Current AI Governance State

The development of robust AI moderation infrastructure has helped turn generative AI from an unpredictable experiment into a viable corporate utility. While the underlying models provide the raw power, control layers like Moonbounce supply the safety belts needed for mainstream adoption. The industry has recognized that the responsibility for ethical output cannot rest solely on the shoulders of foundation model developers. Moving forward, the integration of these safety layers should become as standard as SSL/TLS certificates are for web traffic. Stakeholders must now focus on refining the granularity of these controls and ensuring they do not become bottlenecks for innovation. The transition from reactive filtering to proactive, code-based governance gives enterprises a firmer footing in an AI-driven future.